Edition 0

Mono-spaced Bold

To see the contents of the file my_next_bestselling_novel in your current working directory, enter the cat my_next_bestselling_novel command at the shell prompt and press Enter to execute the command.

Press Enter to execute the command.

Press Ctrl+Alt+F1 to switch to the first virtual terminal. Press Ctrl+Alt+F7 to return to your X-Windows session.

mono-spaced bold. For example:

File-related classes include filesystem for file systems, file for files, and dir for directories. Each class has its own associated set of permissions.

Choose → → from the main menu bar to launch Mouse Preferences. In the Buttons tab, click the Left-handed mouse check box and click to switch the primary mouse button from the left to the right (making the mouse suitable for use in the left hand).

To insert a special character into a gedit file, choose → → from the main menu bar. Next, choose → from the Character Map menu bar, type the name of the character in the Search field and click . The character you sought will be highlighted in the Character Table. Double-click this highlighted character to place it in the Text to copy field and then click the button. Now switch back to your document and choose → from the gedit menu bar.

Mono-spaced Bold Italic or Proportional Bold Italic

To connect to a remote machine using ssh, type ssh username@domain.name at a shell prompt. If the remote machine is example.com and your username on that machine is john, type ssh john@example.com.

The mount -o remount file-system command remounts the named file system. For example, to remount the /home file system, the command is mount -o remount /home.

To see the version of a currently installed package, use the rpm -q package command. It will return a result as follows: package-version-release.

Publican is a DocBook publishing system.

mono-spaced roman and presented thus:

books Desktop documentation drafts mss photos stuff svn books_tests Desktop1 downloads images notes scripts svgs

mono-spaced roman but add syntax highlighting as follows:

    package org.jboss.book.jca.ex1;

    import javax.naming.InitialContext;

    public class ExClient
    {
       public static void main(String args[])
           throws Exception
       {
          InitialContext iniCtx = new InitialContext();
          Object         ref    = iniCtx.lookup("EchoBean");
          EchoHome       home   = (EchoHome) ref;
          Echo           echo   = home.create();

          System.out.println("Created Echo");

          System.out.println("Echo.echo('Hello') = " + echo.echo("Hello"));
       }
    }


Use the yum check-update command to see which installed packages on your system have updates available.

You can use yum to install, update or remove packages on your system. All examples in this chapter assume that you have already obtained superuser privileges by using either the su or sudo command.

~]# yum check-update

Loaded plugins: presto, refresh-packagekit, security

PackageKit.x86_64 0.5.3-0.1.20090915git.fc12 fedora

PackageKit-glib.x86_64 0.5.3-0.1.20090915git.fc12 fedora

PackageKit-yum.x86_64 0.5.3-0.1.20090915git.fc12 fedora

PackageKit-yum-plugin.x86_64 0.5.3-0.1.20090915git.fc12 fedora

glibc.x86_64 2.10.90-22 fedora

glibc-common.x86_64 2.10.90-22 fedora

kernel.x86_64 2.6.31-14.fc12 fedora

kernel-firmware.noarch 2.6.31-14.fc12 fedora

rpm.x86_64 4.7.1-5.fc12 fedora

rpm-libs.x86_64 4.7.1-5.fc12 fedora

rpm-python.x86_64 4.7.1-5.fc12 fedora

yum.noarch 3.2.24-4.fc12 fedora

PackageKit — the name of the package

x86_64 — the CPU architecture the package was built for

0.5.3-0.1.20090915git.fc12 — the version of the updated package to be installed

fedora — the repository in which the updated package is located
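The four fields described above can be pulled apart mechanically. The following is a minimal sketch, not part of yum: parse_update_line is a hypothetical helper, and the sample line is copied from the check-update output shown earlier.

```shell
# Split one line of yum check-update-style output into its fields.
# parse_update_line is a hypothetical helper, not something yum provides.
parse_update_line() (
    # The three whitespace-separated columns: name.arch, version, repository.
    read -r name_arch version repo <<EOF
$1
EOF
    # The architecture is everything after the last dot in the first column.
    name=${name_arch%.*}
    arch=${name_arch##*.}
    printf 'name=%s arch=%s version=%s repo=%s\n' "$name" "$arch" "$version" "$repo"
)

parse_update_line "PackageKit.x86_64 0.5.3-0.1.20090915git.fc12 fedora"
```

For the sample line, this prints name=PackageKit arch=x86_64 version=0.5.3-0.1.20090915git.fc12 repo=fedora.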

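The output also shows that we can update the kernel (the kernel package) and Yum and RPM themselves (the yum and rpm packages), as well as their dependencies (such as the kernel-firmware, rpm-libs and rpm-python packages), all using yum.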

To update a single package and its dependencies, run yum update <package_name>:

~]# yum update glibc

Loaded plugins: presto, refresh-packagekit, security

Setting up Install Process

Resolving Dependencies

--> Running transaction check

--> Processing Dependency: glibc = 2.10.90-21 for package: glibc-common-2.10.90-21.x86_64

---> Package glibc.x86_64 0:2.10.90-22 set to be updated

--> Running transaction check

---> Package glibc-common.x86_64 0:2.10.90-22 set to be updated

--> Finished Dependency Resolution

Dependencies Resolved

======================================================================

Package Arch Version Repository Size

======================================================================

Updating:

glibc x86_64 2.10.90-22 fedora 2.7 M

Updating for dependencies:

glibc-common x86_64 2.10.90-22 fedora 6.0 M

Transaction Summary

======================================================================

Install 0 Package(s)

Upgrade 2 Package(s)

Total download size: 8.7 M

Is this ok [y/N]:

Loaded plugins: presto, refresh-packagekit, security — yum always informs you which Yum plugins are installed and enabled. Here, yum is using the presto, refresh-packagekit and security plugins. Refer to Section 1.4, “Yum Plugins” for general information on Yum plugins, or to Section 1.4.3, “Plugin Descriptions” for descriptions of specific plugins.

kernel.x86_64 — you can download and install new kernels safely with yum.

yum always installs a new kernel in the same sense that RPM installs a new kernel when you use the command rpm -i kernel. Therefore, you do not need to worry about the distinction between installing and upgrading a kernel package when you use yum: it will do the right thing, regardless of whether you are using the yum update or yum install command.

When using RPM, on the other hand, it is important to use the rpm -i kernel command (which installs a new kernel) instead of rpm -U kernel (which replaces the current kernel). Refer to Section 3.2.2, “Installing” for more information on installing/updating kernels with RPM.

yum presents the update information and then prompts you as to whether you want it to perform the update; yum runs interactively by default. If you already know which transactions yum plans to perform, you can use the -y option to automatically answer yes to any questions yum may ask (in which case it runs non-interactively). However, you should always examine which changes yum plans to make to the system so that you can easily troubleshoot any problems that might arise.

To view Yum's transaction log, enter cat /var/log/yum.log at the shell prompt. The most recent transactions are listed at the end of the log file.

To update all packages and their dependencies, enter yum update (without any arguments):

~]# yum update

The security plugin extends the yum command with a set of security-centric commands, subcommands and options. Refer to Section 1.4.3, “security (yum-plugin-security)” for specific information.

Search for packages with the yum search <term> [more_terms] command. yum displays the list of matches for each term:

~]# yum search meld kompare

Loaded plugins: presto, refresh-packagekit, security

=============================== Matched: kompare ===============================

kdesdk.x86_64 : The KDE Software Development Kit (SDK)

komparator.x86_64 : Kompare and merge two folders

================================ Matched: meld =================================

meld.noarch : Visual diff and merge tool

python-meld3.x86_64 : An HTML/XML templating system for Python

yum search is useful for searching for packages you do not know the name of, but for which you know a related term.

yum list and related commands provide information about packages, package groups, and repositories.

Glob expressions use the wildcard characters * (which expands to match any character multiple times) and ? (which expands to match any one character). Be careful to escape both of these glob characters when passing them as arguments to a yum command. If you do not, the bash shell will interpret the glob expressions as pathname expansions, and potentially pass all files in the current directory that match the globs to yum, which is not what you want. Instead, pass the glob expressions themselves to yum, either by escaping the wildcard characters with a backslash or by enclosing the entire glob expression in quotes, as in the following two examples:

~]# yum list available gimp\*plugin\*

Loaded plugins: presto, refresh-packagekit, security

Available Packages

gimp-fourier-plugin.x86_64 0.3.2-3.fc11 fedora

gimp-lqr-plugin.x86_64 0.6.1-2.fc11 updates

~]# yum list installed "krb?-*"

Loaded plugins: presto, refresh-packagekit, security

Installed Packages

krb5-auth-dialog.x86_64 0.12-2.fc12 @fedora

krb5-libs.x86_64 1.7-8.fc12 @fedora

krb5-workstation.x86_64 1.7-8.fc12 @fedora
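The difference between an unescaped, an escaped and a quoted glob can be seen without involving yum at all. In the sketch below, echo stands in for yum, and the scratch directory and file name are assumptions made up for the demonstration.

```shell
# Demonstrate why glob characters must be escaped or quoted before they
# reach yum. echo stands in for yum here so the sketch runs anywhere.
demo_dir=$(mktemp -d)
cd "$demo_dir" || exit 1
touch gimp-old-plugin-notes.txt      # hypothetical file that matches the glob

unescaped=$(echo gimp*plugin*)       # the shell expands the glob to the file name
escaped=$(echo gimp\*plugin\*)       # backslashes pass the glob through unchanged
quoted=$(echo "gimp*plugin*")        # quoting also passes it through unchanged

echo "unescaped: $unescaped"
echo "escaped:   $escaped"
echo "quoted:    $quoted"

cd / && rm -rf "$demo_dir"
```

Only the escaped and quoted forms hand the literal pattern gimp*plugin* to the command. The unescaped form hands over the matching file name from the current directory instead.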

yum list <glob_expr> [more_glob_exprs ] — List information on installed and available packages matching all glob expressions.

~]# yum list abrt-addon\* abrt-plugin\*

Loaded plugins: presto, refresh-packagekit, security

Installed Packages

abrt-addon-ccpp.x86_64 0.0.9-2.fc12 @fedora

abrt-addon-kerneloops.x86_64 0.0.9-2.fc12 @fedora

abrt-addon-python.x86_64 0.0.9-2.fc12 @fedora

abrt-plugin-bugzilla.x86_64 0.0.9-2.fc12 @fedora

abrt-plugin-kerneloopsreporter.x86_64 0.0.9-2.fc12 @fedora

abrt-plugin-sqlite3.x86_64 0.0.9-2.fc12 @fedora

Available Packages

abrt-plugin-filetransfer.x86_64 0.0.9-2.fc12 fedora

abrt-plugin-logger.x86_64 0.0.9-2.fc12 fedora

abrt-plugin-mailx.x86_64 0.0.9-2.fc12 fedora

abrt-plugin-runapp.x86_64 0.0.9-2.fc12 fedora

abrt-plugin-sosreport.x86_64 0.0.9-2.fc12 fedora

abrt-plugin-ticketuploader.x86_64 0.0.9-2.fc12 fedora

yum list all — List all installed and available packages.

yum list installed — List all packages installed on your system. The rightmost column in the output lists the repository from which the package was retrieved.

yum list available — List all available packages in all enabled repositories.

yum grouplist — List all package groups.

yum repolist — List the repository ID, name, and number of packages it provides for each enabled repository.

yum info <package_name> [more_names ] displays information about one or more packages (glob expressions are valid here as well):

~]# yum info abrt

Loaded plugins: presto, refresh-packagekit, security

Installed Packages

Name : abrt

Arch : x86_64

Version : 0.0.9

Release : 2.fc12

Size : 525 k

Repo : installed

From repo : fedora

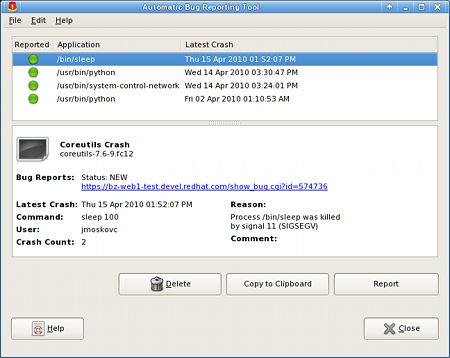

Summary : Automatic bug detection and reporting tool

URL : https://fedorahosted.org/abrt/

License : GPLv2+

Description: abrt is a tool to help users to detect defects in applications and

: to create bug reports that include all information required by the

: maintainer to hopefully resolve it. It uses a plugin system to extend

: its functionality.

yum info <package_name> is similar to the rpm -q --info <package_name> command, but provides as additional information the ID of the Yum repository the RPM package is found in (look for the From repo: line in the output).

yumdb info <package_name> [more_names ] can be used to query the Yum database for alternative and useful information about a package, including the checksum of the package (and algorithm used to produce it, such as SHA-256), the command given on the command line that was invoked to install the package (if any), and the reason that the package is installed on the system (where user indicates it was installed by the user, and dep means it was brought in as a dependency):

~]# yumdb info yum

yum-3.2.27-3.fc12.noarch

checksum_data = 8d7773ec28c954c69c053ea4bf61dec9fdea11a59c50a2c31d1aa2e24bc611d9

checksum_type = sha256

command_line = update

from_repo = updates

from_repo_revision = 1272392716

from_repo_timestamp = 1272414297

reason = user

releasever = 12

Refer to man yumdb for more information on the yumdb command.

The yum history command, which is new in Fedora 13, can be used to show a timeline of Yum transactions, the dates and times at which they occurred, the number of packages affected, whether transactions succeeded or were aborted, and whether the RPM database was changed between transactions. Refer to the history section of man yum for details.

~]# yum install <package_name>

You can install several packages at once by appending their names as arguments: yum install <package_name> [more_names].

To install a package built for a specific architecture, append .arch to the package name:

~]# yum install sqlite2.i586

~]# yum install audacious-plugins-\*

In addition to package names and glob expressions, you can also provide file names to yum install. If you know the name of the binary you want to install, but not its package name, you can give yum install the path name:

~]# yum install /usr/sbin/named

yum then searches through its package lists, finds the package which provides /usr/sbin/named, if any, and prompts you as to whether you want to install it.

What if you know that you want to install the package which contains the named binary, but don't know in which bin or sbin directory that file lives? In that situation, you can give yum provides a glob expression:

~]# yum provides "*bin/named"

Loaded plugins: presto, refresh-packagekit, security

32:bind-9.6.1-0.3.b1.fc11.x86_64 : The Berkeley Internet Name Domain (BIND) DNS (Domain Name System) server

Repo : fedora

Matched from:

Filename : /usr/sbin/named

~]# yum install bind

yum provides is the same as yum whatprovides.

yum provides "*/<file_name>" is a common and useful trick to quickly find the package(s) that contain <file_name>.

The yum grouplist -v command lists the names of all package groups, and, next to each of them, their groupid in parentheses. The groupid is always the term in the last pair of parentheses, such as kde-desktop and kde-software-development in this example:

~]# yum -v grouplist kde\*

KDE (K Desktop Environment) (kde-desktop)

KDE Software Development (kde-software-development)

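You can install a package group by passing either its full group name (without the groupid part) or its groupid to groupinstall: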

~]# yum groupinstall "KDE (K Desktop Environment)"

~]# yum groupinstall kde-desktop

You can even pass the groupid to the install command if you prepend it with an @-symbol (which tells yum that you want to perform a groupinstall):

~]# yum install @kde-desktop

yum remove <package_name> uninstalls (removes in RPM and Yum terminology) the package, as well as any packages that depend on it. As when you install multiple packages, you can remove several at once by adding more package names to the command:

~]# yum remove foo bar baz

Similar to the install command, remove can take, as arguments, package names, glob expressions, file lists or package provides.

You can remove a package group using syntax congruent with the install syntax.

~]# yum groupremove "KDE (K Desktop Environment)"

~]# yum groupremove kde-desktop

~]# yum remove @kde-desktop

When removing a package group, you can instruct yum to remove only those packages which are not required by any other packages or groups by adding the groupremove_leaf_only=1 directive to the [main] section of the /etc/yum.conf configuration file. For more information on this directive, refer to Section 1.3.1, “Setting [main] Options”.

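setting global Yum options by editing the [main] section of the /etc/yum.conf configuration file;

setting options for individual repositories by editing the [repository] sections in /etc/yum.conf and .repo files in the /etc/yum.repos.d/ directory;

using Yum variables in /etc/yum.conf and files in /etc/yum.repos.d/ so that dynamic version and architecture values are handled correctly; and,

setting up your own custom Yum repository.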

The /etc/yum.conf configuration file contains one mandatory [main] section under which you can set Yum options. The values that you define in the [main] section of yum.conf have global effect, and may override values set in any individual [repository] sections. You can also add [repository] sections to /etc/yum.conf; however, best practice is to define individual repositories in new or existing .repo files in the /etc/yum.repos.d/ directory. Refer to Section 1.3.2, “Setting [repository] Options” if you need to add or edit repository-specific information.

The /etc/yum.conf configuration file contains exactly one [main] section. You can add many additional options under the [main] section heading in /etc/yum.conf. Some of the key-value pairs in the [main] section affect how yum operates; others affect how Yum treats repositories. The best source of information for all Yum options is in the [main] OPTIONS and [repository] OPTIONS sections of man yum.conf.

A sample /etc/yum.conf configuration file:

[main]

cachedir=/var/cache/yum/$basearch/$releasever

keepcache=0

debuglevel=2

logfile=/var/log/yum.log

exactarch=1

obsoletes=1

gpgcheck=1

plugins=1

installonly_limit=3

[comments abridged]

# PUT YOUR REPOS HERE OR IN separate files named file.repo

# in /etc/yum.repos.d
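To check which value a given [main] option currently has, the file can be read with standard tools. The sketch below is one possible approach and not a yum feature: get_main_option is a hypothetical helper, it works on a temporary copy of a few lines from the sample file rather than on the real /etc/yum.conf, and it assumes option values contain no = character.

```shell
# Read one option from the [main] section of a yum.conf-style file.
# get_main_option is a hypothetical helper; yum itself does not provide it.
get_main_option() (
    conf_file=$1
    key=$2
    awk -F= -v key="$key" '
        /^\[/ { in_main = ($0 == "[main]") }    # track which section we are in
        in_main && $1 == key { print $2; exit } # first match inside [main] wins
    ' "$conf_file"
)

# A temporary file holding a few lines from the sample configuration above.
sample_conf=$(mktemp)
cat > "$sample_conf" <<'EOF'
[main]
cachedir=/var/cache/yum/$basearch/$releasever
keepcache=0
gpgcheck=1
installonly_limit=3
EOF

get_main_option "$sample_conf" gpgcheck
get_main_option "$sample_conf" installonly_limit
```

Tracking the current section matters because the same key, such as gpgcheck, may also appear in [repository] sections with a different value.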

Here is a list of the most commonly-used options in the [main] section, and descriptions for each:

cachedir=/var/cache/yum/$basearch/$releasever sets the directory where yum stores its cache and database files. See Section 1.3.3, “Using Yum Variables” for descriptions of the $basearch and $releasever Yum variables.

keepcache=1 instructs yum to keep the cache of headers and packages after a successful installation. keepcache=1 is the default.

reposdir=<directory> specifies the directory where .repo files are located. .repo files contain repository information (similar to the [repository] section(s) of /etc/yum.conf). yum collects all repository information from .repo files and the [repository] section of the /etc/yum.conf file to create a master list of repositories to use for transactions. Refer to Section 1.3.2, “Setting [repository] Options” for more information about options you can use for both the [repository] section and .repo files. If reposdir is not set, yum uses the default directory /etc/yum.repos.d/.

gpgcheck=<1 or 0> controls whether yum performs GPG signature checks on packages. The default is gpgcheck=0, which disables GPG-checking. If this option is set in the [main] section of the /etc/yum.conf file, it sets the GPG-checking rule for all repositories. However, you can also set this on individual repositories instead; i.e., you can enable GPG-checking on one repository while disabling it on another. Setting gpgcheck= for individual repositories overrides the default if it is present in /etc/yum.conf. Refer to Section 3.3, “Checking a Package's Signature” for further information on GPG signature-checking.

assumeyes=<1 or 0> determines whether yum should prompt for confirmation of critical actions. The default is assumeyes=0, which means yum will prompt you for confirmation. If assumeyes=1 is set, yum behaves in the same way that the command line option -y does.

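exclude=<package_name> [more_names] excludes the named packages from installs and updates. Shell globs using wildcards (for example, * and ?) are allowed.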

retries=<number> sets the number of times yum should attempt to retrieve a file before returning an error. Setting this to 0 makes yum retry forever. The default value is 6.

groupremove_leaf_only=<1 or 0>: setting this to 1 causes yum to check the dependencies of each package when removing a package group, and to remove only those packages which are not required by any other package or group. The default value for this directive is 0, which means that removing a package group will remove all packages in that group regardless of whether they are required by other packages or groups. For more information on removing packages, refer to Smart package group removal.

You can add [repository] sections (where repository is a unique repository ID, such as [my_personal_repo]) to /etc/yum.conf or to .repo files in the /etc/yum.repos.d/ directory. All .repo files in /etc/yum.repos.d/ are read by yum; best practice is to define your repositories here instead of in /etc/yum.conf. You can create new, custom .repo files in this directory, add [repository] sections to those files, and the next time you run a yum command, it will take all newly-added repositories into account.

Here is a bare-minimum example of the form a .repo file should take:

[repository_ID]

name=A Repository Name

baseurl=http://path/to/repo or ftp://path/to/repo or file://path/to/local/repo

Every [repository] section must contain the following minimum parts:

baseurl=http://path/to/repo/releases/$releasever/server/$basearch/os/

If the repository requires basic HTTP authentication, you can specify the username and password in the baseurl= line by prepending them as username:password@link. For example, if a repository on http://www.example.com/repo/ requires a username of "user" and a password of "password", then the baseurl link can be specified as baseurl=http://user:password@www.example.com/repo/

Another useful [repository] option:

enabled=0 instructs yum not to include that repository as a package source when performing updates and installs. This is an easy way of quickly turning repositories on and off, which is useful when you desire a single package from a repository that you do not want to enable for updates, etc. Turning repositories on and off can also be performed quickly by passing either the --enablerepo=<repo_name> or --disablerepo=<repo_name> option to yum, or easily through PackageKit's Add/Remove Software window. For the latter, refer to Section 2.2.1, “Refreshing Software Sources (Yum Repositories)”.

Many more [repository] options exist. Refer to the [repository] OPTIONS section of man yum.conf for the exhaustive list.
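Putting the pieces above together, a complete .repo file might be written as in the following sketch. The repository ID my_personal_repo comes from the text. The repository name, the /mnt/local_repo path and the scratch directory are assumptions for illustration. On a real system the file would live in /etc/yum.repos.d/ and be written as root.

```shell
# Write a minimal .repo file into a scratch directory and read it back.
# The scratch directory stands in for /etc/yum.repos.d/ so that the sketch
# can be run without superuser privileges.
repo_dir=$(mktemp -d)
repo_file="$repo_dir/my_personal_repo.repo"

cat > "$repo_file" <<'EOF'
[my_personal_repo]
name=My Personal Repository
baseurl=file:///mnt/local_repo
enabled=0
EOF

cat "$repo_file"
```

Because the sketch sets enabled=0, yum would skip this repository for ordinary updates and installs. It could still be switched on for a single command with the --enablerepo=my_personal_repo option.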

You can use and reference the following variables in yum commands and in all Yum configuration files (/etc/yum.conf and all .repo files in /etc/yum.repos.d/).

$releasever: the release version of Fedora. Yum obtains the value of $releasever from the distroverpkg=<value> line in the /etc/yum.conf configuration file. If there is no such line in /etc/yum.conf, then yum infers the correct value by deriving the version number from the redhat-release package.

$arch: the system's CPU architecture, as returned when calling Python's os.uname() function. Valid values for $arch include: i586, i686 and x86_64.

$basearch: you can use $basearch to reference the base architecture of the system. For example, i686 and i586 machines both have a base architecture of i386, and AMD64 and Intel64 machines have a base architecture of x86_64.

$YUM0-9: these ten variables are each replaced with the value of the shell environment variable of the same name. If one of these variables is referenced (in /etc/yum.conf for example) and a shell environment variable with the same name does not exist, then the configuration file variable is not replaced.
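The relationship between $arch and $basearch, and the way both variables end up in a baseurl, can be sketched as follows. base_arch_of is a hypothetical helper that covers only the architectures named above and does not reproduce yum's own lookup. The release version 12 and the placeholder URL are taken from examples elsewhere in this chapter.

```shell
# Map a CPU architecture to its base architecture, following the examples
# in the text: i586 and i686 share the base architecture i386.
# base_arch_of is a hypothetical helper, not yum's own lookup.
base_arch_of() {
    case "$1" in
        i586|i686) echo i386 ;;
        x86_64)    echo x86_64 ;;
        *)         echo "$1" ;;   # assumption: other architectures map to themselves
    esac
}

# Expand the two variables in a baseurl by hand, as an illustration of what
# the substitution produces.
arch=i686
releasever=12
basearch=$(base_arch_of "$arch")
echo "http://path/to/repo/releases/$releasever/server/$basearch/os/"
```

With these values the last line prints http://path/to/repo/releases/12/server/i386/os/, which shows why a single .repo file can serve both i586 and i686 machines.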

Install the createrepo package:

~]# yum install createrepo

Copy all of the packages into one directory, such as /mnt/local_repo/.

Run the createrepo --database command on that directory:

~]# createrepo --database /mnt/local_repo

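This creates the necessary metadata for your Yum repository, as well as the sqlite database for speeding up yum operations.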

Yum informs you which plugins, if any, are loaded and active whenever you call any yum command:

~]# yum info yum

Loaded plugins: presto, refresh-packagekit, security

[output truncated]

Note that the plugin names which follow Loaded plugins are the names you can provide to the --disableplugin=<plugin_name> option.

To enable Yum plugins, ensure that a line beginning with plugins= is present in the [main] section of /etc/yum.conf, and that its value is set to 1:

plugins=1

You can disable all plugins by changing this line to plugins=0.

Every installed plugin has its own configuration file in the /etc/yum/pluginconf.d/ directory. You can set plugin-specific options in these files. For example, here is the security plugin's security.conf configuration file:

[main]

enabled=1

Plugin configuration files always contain a [main] section (similar to Yum's /etc/yum.conf file) in which there is (or you can place if it is missing) an enabled= option that controls whether the plugin is enabled when you run yum commands.

If you disable all plugins by setting plugins=0 in /etc/yum.conf, then all plugins are disabled regardless of whether they are enabled in their individual configuration files.

If you simply want to disable all Yum plugins for a single yum command, use the --noplugins option.

If you simply want to disable one or more Yum plugins for a single yum command, then you can add the --disableplugin=<plugin_name> option to the command:

~]# yum update --disableplugin=presto

The plugin names you provide to the --disableplugin= option are the same names listed after the Loaded plugins: line in the output of any yum command. You can disable multiple plugins by separating their names with commas. In addition, you can match multiple similarly-named plugin names or simply shorten long ones by using glob expressions: --disableplugin=presto,refresh-pack*.
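To see which of the loaded plugins a comma-separated value such as presto,refresh-pack* would select, the matching can be imitated with ordinary shell patterns. This is only a sketch of the idea and not yum's own matching code. is_disabled is a hypothetical helper, and the plugin names are those shown on the Loaded plugins: line in this chapter.

```shell
# Imitate how a comma-separated --disableplugin= value with globs selects
# plugin names. is_disabled is a hypothetical helper, not part of yum.
is_disabled() (
    plugin=$1
    patterns=$2
    IFS=,                       # split the pattern list on commas
    set -f                      # keep the globs from expanding against files
    for pattern in $patterns; do
        case "$plugin" in
            $pattern) exit 0 ;; # unquoted so that * and ? act as wildcards
        esac
    done
    exit 1
)

for plugin in presto refresh-packagekit security; do
    if is_disabled "$plugin" 'presto,refresh-pack*'; then
        echo "$plugin: disabled"
    else
        echo "$plugin: loaded"
    fi
done
```

Under these assumptions presto and refresh-packagekit are reported as disabled, while security stays loaded.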

Yum plugins usually adhere to the yum-plugin-<plugin_name> package-naming convention, but not always: the package which provides the presto plugin is named yum-presto, for example. You can install a Yum plugin in the same way you install other packages:

~]# yum install yum-plugin-security

The protect-packages plugin prevents the yum package and all packages it depends on from being purposefully or accidentally removed. This simple scheme prevents many of the most important packages necessary for your system to run from being removed. In addition, you can list more packages, one per line, in the /etc/sysconfig/protected-packages file[1] (which you should create if it does not exist), and protect-packages will extend protection-from-removal to those packages as well. To temporarily override package protection, use the --override-protection option with an applicable yum command.

The security plugin extends yum with a set of security-related commands, subcommands and options.

~]# yum check-update --security

Loaded plugins: presto, refresh-packagekit, security

Limiting package lists to security relevant ones

Needed 3 of 7 packages, for security

elinks.x86_64 0.12-0.13.pre3.fc11 fedora

kernel.x86_64 2.6.30.8-64.fc11 fedora

kernel-headers.x86_64 2.6.30.8-64.fc11 fedora

You can then use either yum update --security or yum update-minimal --security to update those packages which are affected by security advisories. Both of these commands update all packages on the system for which a security advisory has been issued. yum update-minimal --security updates them to the latest packages which were released as part of a security advisory, while yum update --security will update all packages affected by a security advisory to the latest version of that package available.

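For example, suppose kernel-2.6.30.8-32 was released as a security update and kernel-2.6.30.8-64 was later released as a bug fix update. In that case, yum update-minimal --security will update you to kernel-2.6.30.8-32, and yum update --security will update you to kernel-2.6.30.8-64. Conservative system administrators may want to use update-minimal to reduce the risk incurred by updating packages as much as possible.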

Refer to man yum-security for usage details and further explanation of the enhancements the security plugin adds to yum.

The Yum Guides section of the wiki contains more Yum documentation.

[1]

You can also place files with the extension .list in the /etc/sysconfig/protected-packages.d/ directory (which you should create if it does not exist), and list packages—one per line—in these files. protect-packages will protect these too.

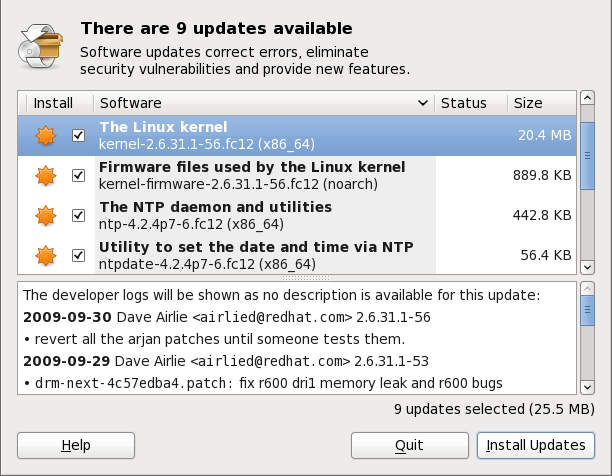

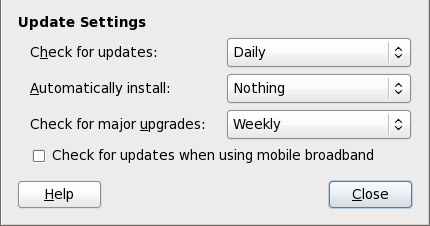

You can open the Software Updates window by running the gpk-update-viewer command at the shell prompt. In the Software Updates window, all available updates are listed along with the names of the packages being updated (minus the .rpm suffix, but including the CPU architecture), a short summary of the package, and, usually, short descriptions of the changes the update provides. Any updates you do not wish to install can be de-selected here by unchecking the checkbox corresponding to the update.

If the updates include the kernel package, then PackageKit will prompt you after installation, asking you whether you want to reboot the system and thereby boot into the newly-installed kernel.

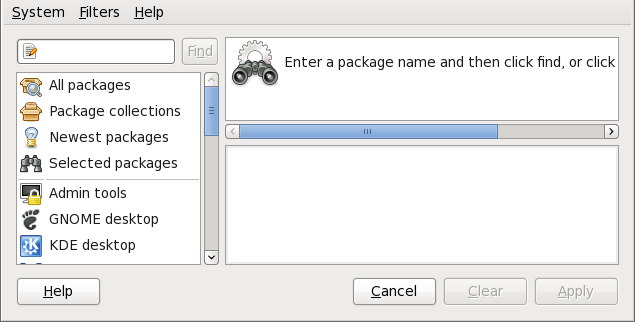

You can open the Add/Remove Software window by running the gpk-application command at the shell prompt.

The Software Sources window shows the repository names as written in the name=<My Repository Name> field of all [repository] sections in the /etc/yum.conf configuration file, and in all repository.repo files in the /etc/yum.repos.d/ directory.

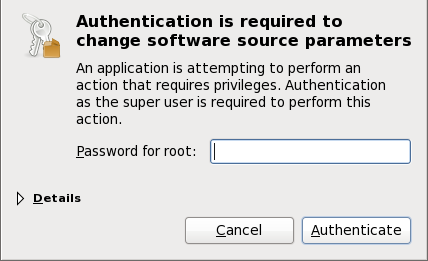

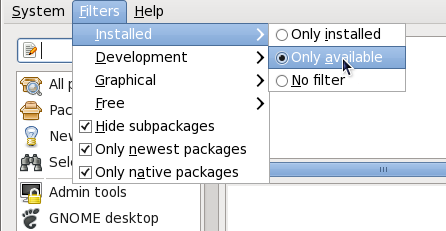

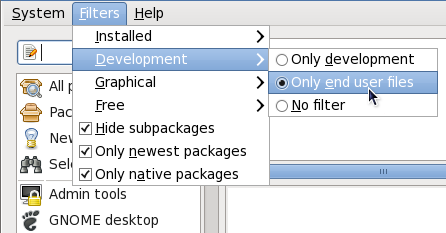

The checkbox next to each repository corresponds to the enabled=<1 or 0> field in [repository] sections. Checking an unchecked box enables the Yum repository, and unchecking it disables it. Performing either function causes PolicyKit to prompt for superuser authentication to enable or disable the repository. PackageKit actually inserts the enabled=<1 or 0> line into the correct [repository] section if it does not exist, or changes the value if it does. This means that enabling or disabling a repository through the Software Sources window causes that change to persist after closing the window or rebooting the system. The ability to quickly enable and disable repositories based on our needs is a highly-convenient feature of PackageKit.

<package_name>-devel

<package> <package>-devel

<package>-libs

<package>-libs-devel

<package>-debuginfo

crontabs-1.10-31.fc12.noarch.rpm) are never filtered out by checking . This filter has no effect on non-multilib systems, such as x86 machines.

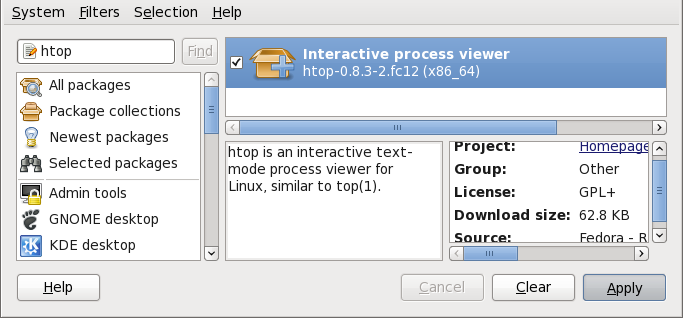

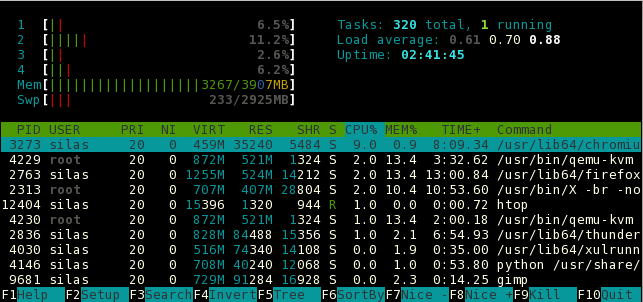

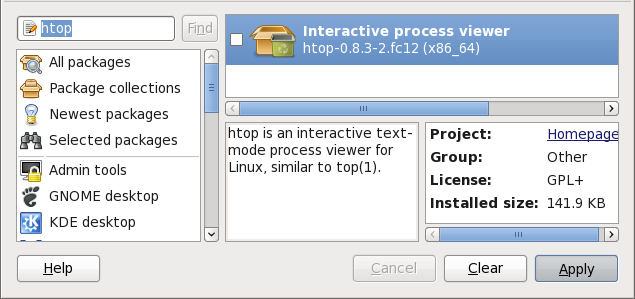

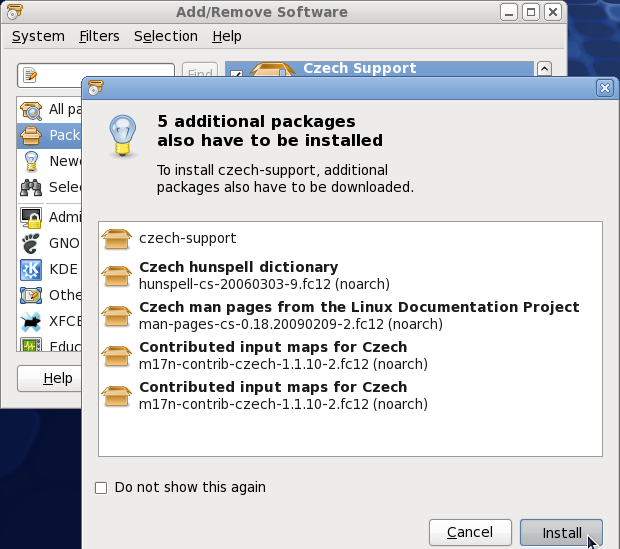

After installation, we can run htop, a colorful and enhanced version of the top process viewer, by opening a shell prompt and entering:

~]$ htop

top is good enough for us and we want to uninstall it. Remembering that we need to change the filter we recently used to install it to in → , we search for htop again and uncheck it. The program did not install any dependencies of its own; if it had, those would be automatically removed as well, as long as they were not also dependencies of any other packages still installed on our system.

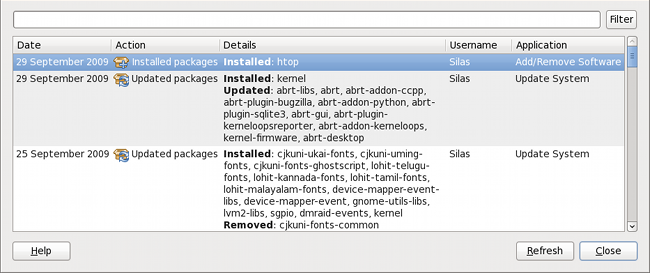

You can open the Software Log Viewer by running the gpk-log command at the shell prompt.

The log lists the action performed, such as Updated System or Installed Packages, the Date on which that action was performed, the Username of the user who performed the action, and the front end Application the user used (such as Update Icon, or kpackagekit). The Details column provides the types of the transactions, such as Updated, Installed or Removed, as well as the list of packages the transactions were performed on.

The graphical front ends communicate with the packagekitd daemon back end, which communicates with a package manager-specific back end that utilizes Yum (on Fedora) to perform the actual transactions, such as installing and removing packages, etc.

| Window Title | Function | How to Open | Shell Command |
|---|---|---|---|
| Add/Remove Software | Install, remove or view package info | From the GNOME panel: → → | gpk-application |
| Software Update | Perform package updates | From the GNOME panel: → → | gpk-update-viewer |
| Software Sources | Enable and disable Yum repositories | From Add/Remove Software: → | gpk-repo |
| Software Log Viewer | View the transaction log | From Add/Remove Software: → | gpk-log |
| Software Update Preferences | Set PackageKit preferences | | gpk-prefs |
| (Notification Area Alert) | Alerts you when updates are available | From the GNOME panel: → → , Application Autostart tab | gpk-update-icon |

The packagekitd daemon runs outside the user session and communicates with the various graphical front ends. The packagekitd daemon[2] communicates via the DBus system message bus with another back end, which utilizes Yum's Python API to perform queries and make changes to the system. On Linux systems other than Red Hat and Fedora, packagekitd can communicate with other back ends that are able to utilize the native package manager for that system. This modular architecture provides the abstraction necessary for the graphical interfaces to work with many different package managers to perform essentially the same types of package management tasks. Learning how to use the PackageKit front ends means that you can use the same familiar graphical interface across many different Linux distributions, even when they utilize a native package manager other than Yum.

packagekitd daemon, which runs outside of the user session.

The graphical front ends shown in this chapter are provided by the gnome-packagekit package instead of by PackageKit and its dependencies. Users working in a KDE environment may prefer to install the kpackagekit package, which provides a KDE interface for PackageKit.

PackageKit also comes with a console-based front end called pkcon.

[2]

System daemons are typically long-running processes that provide services to the user or to other programs, and which are started, often at boot time, by special initialization scripts (often shortened to init scripts). Daemons respond to the service command and can be turned on or off permanently by using the chkconfig on or chkconfig off commands. They can typically be recognized by a “d” appended to their name, such as the packagekitd daemon. Refer to Chapter 6, Controlling Access to Services for information about system services.

x86_64.rpm.

.tar.gz files.

For complete details and options, try rpm --help or man rpm. You can also refer to Section 3.5, “Additional Resources” for more information on RPM.

RPM packages typically have file names like tree-1.5.2.2-4.fc13.x86_64.rpm. The file name includes the package name (tree), version (1.5.2.2), release (4), operating system major version (fc13) and CPU architecture (x86_64). Assuming the tree-1.5.2.2-4.fc13.x86_64.rpm package is in the current directory, log in as root and type the following command at a shell prompt to install it:

rpm -ivh tree-1.5.2.2-4.fc13.x86_64.rpm

The -i option tells rpm to install the package, and the -v and -h options (which are combined with -i) cause rpm to print more verbose output and display a progress meter using hash marks.
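The naming convention described above can be taken apart with plain shell parameter expansion. The sketch below is illustrative only: split_rpm_filename is a hypothetical helper, and it assumes the conventional name-version-release.arch.rpm layout. It reports the release together with the operating system tag (4.fc13) as a single field.

```shell
# Split an RPM file name into the parts the text names.
# split_rpm_filename is a hypothetical helper, not an rpm subcommand.
split_rpm_filename() (
    base=${1%.rpm}              # tree-1.5.2.2-4.fc13.x86_64
    arch=${base##*.}            # x86_64
    nvr=${base%.*}              # tree-1.5.2.2-4.fc13
    release=${nvr##*-}          # 4.fc13
    nv=${nvr%-*}                # tree-1.5.2.2
    version=${nv##*-}           # 1.5.2.2
    name=${nv%-*}               # tree
    printf 'name=%s version=%s release=%s arch=%s\n' "$name" "$version" "$release" "$arch"
)

split_rpm_filename tree-1.5.2.2-4.fc13.x86_64.rpm
```

For the tree package this prints name=tree version=1.5.2.2 release=4.fc13 arch=x86_64. The name field is what commands that operate on installed packages expect, as opposed to the full file name.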

Alternatively, use the -U option, which upgrades the package if an older version is already installed, or simply installs it if not:

rpm -Uvh tree-1.5.2.2-4.fc13.x86_64.rpm

Preparing... ########################################### [100%]

1:tree ########################################### [100%]

error: tree-1.5.2.2-4.fc13.x86_64.rpm: Header V3 RSA/SHA256 signature: BAD, key ID d22e77f2


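If you do not have the appropriate key installed to verify the signature, the message contains the word NOKEY: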

warning: tree-1.5.2.2-4.fc13.x86_64.rpm: Header V3 RSA/SHA1 signature: NOKEY, key ID 57bbccba

If you are installing a kernel package, you should always use the rpm -ivh command (simple install) instead of rpm -Uvh. The reason for this is that install (-i) and upgrade (-U) take on specific meanings when installing kernel packages. Refer to Chapter 28, Manually Upgrading the Kernel for details.

Preparing... ########################################### [100%]
package tree-1.5.2.2-4.fc13.x86_64 is already installed

--replacepkgs option, which tells RPM to ignore the error:

rpm -ivh --replacepkgs tree-1.5.2.2-4.fc13.x86_64.rpm

Preparing... ##################################################
file /usr/bin/foobar from install of foo-1.0-1.fc13 conflicts with file from package bar-3.1.1.fc13

--replacefiles option:

rpm -ivh --replacefiles foo-1.0-1.fc13.x86_64.rpm

error: Failed dependencies:

bar.so.3()(64bit) is needed by foo-1.0-1.fc13.x86_64

Suggested resolutions:

bar-3.1.1.fc13.x86_64.rpm

rpm -ivh foo-1.0-1.fc13.x86_64.rpm bar-3.1.1.fc13.x86_64.rpm

Preparing... ########################################### [100%]
1:foo ########################################### [ 50%]
2:bar ########################################### [100%]

--whatprovides option to determine which package contains the required file.

rpm -q --whatprovides "bar.so.3"

bar.so.3 is in the RPM database, the name of the package is displayed:

bar-3.1.1.fc13.x86_64

Although we can force rpm to install a package that gives us a Failed dependencies error (using the --nodeps option), this is not recommended, and will usually result in the installed package failing to run. Installing or removing packages with rpm --nodeps can cause applications to misbehave or crash, and can cause serious package management problems or, possibly, system failure. For these reasons, it is best to heed such warnings; the package manager (whether RPM, Yum or PackageKit) shows us these warnings and suggests possible fixes because accounting for dependencies is critical. The Yum package manager can perform dependency resolution and fetch dependencies from online repositories, making it safer, easier and smarter than forcing rpm to carry out actions without regard to resolving dependencies.

rpm -e foo

foo, not the name of the original package file, foo-1.0-1.fc13.x86_64. If you attempt to uninstall a package using the rpm -e command and the original full file name, you will receive a package name error.

rpm -e ghostscript

error: Failed dependencies:

libgs.so.8()(64bit) is needed by (installed) libspectre-0.2.2-3.fc13.x86_64

libgs.so.8()(64bit) is needed by (installed) foomatic-4.0.3-1.fc13.x86_64

libijs-0.35.so()(64bit) is needed by (installed) gutenprint-5.2.4-5.fc13.x86_64

ghostscript is needed by (installed) printer-filters-1.1-4.fc13.noarch

<library_name>.so.<number>

~]# rpm -q --whatprovides "libgs.so.8()(64bit)"

ghostscript-8.70-1.fc13.x86_64

Although we can force rpm to remove a package that gives us a Failed dependencies error (using the --nodeps option), this is not recommended, and may cause harm to other installed applications. Installing or removing packages with rpm --nodeps can cause applications to misbehave or crash, and can cause serious package management problems or, possibly, system failure. For these reasons, it is best to heed such warnings; the package manager (whether RPM, Yum or PackageKit) shows us these warnings and suggests possible fixes because accounting for dependencies is critical. The Yum package manager can perform dependency resolution and fetch dependencies from online repositories, making it safer, easier and smarter than forcing rpm to carry out actions without regard to resolving dependencies.

-U option) is similar to installing one (the -i option). If we have the RPM named tree-1.5.3.0-1.fc13.x86_64.rpm in our current directory, and tree-1.5.2.2-4.fc13.x86_64.rpm is already installed on our system (rpm -qi will tell us which version of the tree package we have installed on our system, if any), then the following command will upgrade tree to the newer version:

rpm -Uvh tree-1.5.3.0-1.fc13.x86_64.rpm

foo package. Note that -U will also install a package even when there are no previous versions of the package installed.

-U option for installing kernel packages because RPM completely replaces the previous kernel package. This does not affect a running system, but if the new kernel is unable to boot during your next restart, there would be no other kernel to boot instead.

-i option adds the kernel to your GRUB boot menu (/etc/grub.conf). Similarly, removing an old, unneeded kernel removes the kernel from GRUB.

saving /etc/foo.conf as /etc/foo.conf.rpmsave

foo.conf.rpmnew, and leave the configuration file you modified untouched. You should still resolve any conflicts between your modified configuration file and the new one, usually by merging changes from the old one to the new one with a diff program.
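After several upgrades it is easy to lose track of which configuration files still need merging. The sketch below lists any leftover .rpmsave and .rpmnew files under a directory. The function name list_rpm_config_leftovers is hypothetical, and the demonstration runs against a scratch directory rather than /etc so that it is safe to try.

```shell
# List .rpmnew and .rpmsave files under a directory, sorted by path.
# list_rpm_config_leftovers is a hypothetical helper name.
list_rpm_config_leftovers() {
    find "$1" -type f \( -name '*.rpmnew' -o -name '*.rpmsave' \) | sort
}

# Demonstration against a scratch directory (stand-in for /etc).
scratch=$(mktemp -d)
touch "$scratch/foo.conf" "$scratch/foo.conf.rpmsave" "$scratch/bar.conf.rpmnew"
list_rpm_config_leftovers "$scratch"
rm -rf "$scratch"
```

On a real system the same function could be pointed at /etc; each file it reports can then be compared with its counterpart using a diff program, as described above.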

package foo-2.0-1.fc13.x86_64.rpm (which is newer than foo-1.0-1) is already installed

--oldpackage option:

rpm -Uvh --oldpackage foo-1.0-1.fc13.x86_64.rpm

rpm -Fvh foo-2.0-1.fc13.x86_64.rpm

*.rpm glob:

rpm -Fvh *.rpm

/var/lib/rpm/, and is used to query which packages are installed and which version of each package is installed, and to detect changes to any files in a package since installation, among other uses.

-q option. The rpm -q <package_name> command displays the package name, version, and release number of the installed package <package_name>. For example, using rpm -q tree to query installed package tree might generate the following output:

tree-1.5.2.2-4.fc13.x86_64

man rpm for details) to further refine or qualify your query:

-a — queries all currently installed packages.

-f <file_name> — queries the RPM database for which package owns <file_name>. Specify the absolute path of the file (for example, rpm -qf /bin/ls instead of rpm -qf ls).

-p <package_file> — queries the uninstalled package <package_file>.

-i displays package information including name, description, release, size, build date, install date, vendor, and other miscellaneous information.

-l displays the list of files that the package contains.

-s displays the state of all the files in the package.

-d displays a list of files marked as documentation (man pages, info pages, READMEs, etc.) in the package.

-c displays a list of files marked as configuration files. These are the files you edit after installation to adapt and customize the package to your system (for example, sendmail.cf, passwd, inittab, etc.).

-v to the command to display the lists in a familiar ls -l format.

rpm -V verifies a package. You can use any of the Verify Options listed for querying to specify the packages you wish to verify. A simple use of verifying is rpm -V tree, which verifies that all the files in the tree package are as they were when they were originally installed. For example:

rpm -Vf /usr/bin/tree

/usr/bin/tree is the absolute path to the file used to query a package.

rpm -Va

rpm -Vp tree-1.5.2.2-4.fc13.x86_64.rpm

c" denotes a configuration file) and then the file name. Each of the eight characters denotes the result of a comparison of one attribute of the file to the value of that attribute recorded in the RPM database. A single period (.) means the test passed. The following characters denote specific discrepancies:

5 — MD5 checksum

S — file size

L — symbolic link

T — file modification time

D — device

U — user

G — group

M — mode (includes permissions and file type)

? — unreadable file (file permission errors, for example)
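The attribute string at the start of each line of verify output can be decoded mechanically using the list above. The following is a small sketch; decode_verify_flags is a hypothetical helper, and it only interprets the characters listed in this section.

```shell
# Translate an rpm -V attribute string (for example "S.5....T") into words.
# decode_verify_flags is a hypothetical helper, not part of rpm.
decode_verify_flags() (
    flags=$1
    out=""
    while [ -n "$flags" ]; do
        c=${flags%"${flags#?}"}   # first character of the remaining string
        flags=${flags#?}          # drop that character
        case $c in
            5)   d="MD5 checksum" ;;
            S)   d="file size" ;;
            L)   d="symbolic link" ;;
            T)   d="file modification time" ;;
            D)   d="device" ;;
            U)   d="user" ;;
            G)   d="group" ;;
            M)   d="mode" ;;
            '?') d="unreadable file" ;;
            .)   d="" ;;          # a period means the test passed
            *)   d="unknown($c)" ;;
        esac
        if [ -n "$d" ]; then
            out="${out:+$out, }$d"
        fi
    done
    printf '%s\n' "${out:-no differences}"
)

decode_verify_flags "S.5....T"
# prints: file size, MD5 checksum, file modification time
```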

<rpm_file> is the file name of the RPM package):

rpm -K --nosignature <rpm_file>

<rpm_file>: rsa sha1 (md5) pgp md5 OK

To see a more verbose message, replace -K with -Kvv in the command.

x files as well.

/etc/pki/rpm-gpg/ directory. To verify a Fedora Project package, first import the correct key based on your processor architecture:

rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-fedora-x86_64

rpm -qa 'gpg-pubkey*'

gpg-pubkey-57bbccba-4a6f97af

rpm -qi followed by the output from the previous command:

rpm -qi gpg-pubkey-57bbccba-4a6f97af

<rpm_file> with the filename of the RPM package):

rpm -K <rpm_file>

rsa sha1 (md5) pgp md5 OK. This means that the signature of the package has been verified, that it is not corrupt, and is therefore safe to install and use.
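When checking many downloaded packages, the result lines can be classified by looking at how they end. The sketch below works on a line of text rather than calling rpm, so it can be tried anywhere. The helper name check_rpm_k_line is hypothetical, and treating "NOT OK" as the failure marker is an assumption about the output format rather than something stated in this section.

```shell
# Classify one line of rpm -K output by its trailing status.
# check_rpm_k_line is a hypothetical helper; "NOT OK" as the failure
# marker is an assumption about rpm's output.
check_rpm_k_line() {
    case $1 in
        *"NOT OK"*) echo "FAILED" ;;
        *" OK")     echo "verified" ;;
        *)          echo "unrecognized" ;;
    esac
}

check_rpm_k_line "tree-1.5.2.2-4.fc13.x86_64.rpm: rsa sha1 (md5) pgp md5 OK"
# prints: verified
```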

rpm -Va

rpm -qf /usr/bin/ghostscript

ghostscript-8.70-1.fc13.x86_64

/usr/bin/paste. You would like to verify the package that owns that program, but you do not know which package owns paste. Enter the following command:

rpm -Vf /usr/bin/paste

rpm -qdf /usr/bin/free

/usr/share/doc/procps-3.2.8/BUGS
/usr/share/doc/procps-3.2.8/FAQ
/usr/share/doc/procps-3.2.8/NEWS
/usr/share/doc/procps-3.2.8/TODO
/usr/share/man/man1/free.1.gz
/usr/share/man/man1/pgrep.1.gz
/usr/share/man/man1/pkill.1.gz
/usr/share/man/man1/pmap.1.gz
/usr/share/man/man1/ps.1.gz
/usr/share/man/man1/pwdx.1.gz
/usr/share/man/man1/skill.1.gz
/usr/share/man/man1/slabtop.1.gz
/usr/share/man/man1/snice.1.gz
/usr/share/man/man1/tload.1.gz
/usr/share/man/man1/top.1.gz
/usr/share/man/man1/uptime.1.gz
/usr/share/man/man1/w.1.gz
/usr/share/man/man1/watch.1.gz
/usr/share/man/man5/sysctl.conf.5.gz
/usr/share/man/man8/sysctl.8.gz
/usr/share/man/man8/vmstat.8.gz

rpm -qip crontabs-1.10-31.fc13.noarch.rpm

Name        : crontabs                     Relocations: (not relocatable)
Version     : 1.10                              Vendor: Fedora Project
Release     : 31.fc13                       Build Date: Sat 25 Jul 2009 06:37:57 AM CEST
Install Date: (not installed)               Build Host: x86-6.fedora.phx.redhat.com
Group       : System Environment/Base       Source RPM: crontabs-1.10-31.fc13.src.rpm
Size        : 2486                             License: Public Domain and GPLv2
Signature   : RSA/SHA1, Tue 11 Aug 2009 01:11:19 PM CEST, Key ID 9d1cc34857bbccba
Packager    : Fedora Project
Summary     : Root crontab files used to schedule the execution of programs
Description :
The crontabs package contains root crontab files and directories. You will need to install cron daemon to run the jobs from the crontabs. The cron daemon such as cronie or fcron checks the crontab files to see when particular commands are scheduled to be executed. If commands are scheduled, it executes them. Crontabs handles a basic system function, so it should be installed on your system.

crontabs RPM package installs. You would enter the following:

rpm -qlp crontabs-1.10-31.fc13.noarch.rpm

/etc/cron.daily
/etc/cron.hourly
/etc/cron.monthly
/etc/cron.weekly
/etc/crontab
/usr/bin/run-parts
/usr/share/man/man4/crontabs.4.gz

rpm --help — This command displays a quick reference of RPM parameters.

man rpm — The RPM man page gives more detail about RPM parameters than the rpm --help command.

/etc/sysconfig/network-scripts/ directory. The scripts used to activate and deactivate these network interfaces are also located here. Although the number and type of interface files can differ from system to system, there are three categories of files that exist in this directory:

/etc/hosts 127.0.0.1) as localhost.localdomain. For more information, refer to the hosts man page.

/etc/resolv.conf resolv.conf man page.

/etc/sysconfig/network /etc/sysconfig/network ”.

/etc/sysconfig/network-scripts/ifcfg-<interface-name> /etc/sysconfig/networking/ directory is used by the Network Administration Tool (system-config-network) and its contents should not be edited manually. Using only one method for network configuration is strongly encouraged, due to the risk of configuration deletion.

ifcfg-<name> , where <name> refers to the name of the device that the configuration file controls.

ifcfg-eth0, which controls the first Ethernet network interface card or NIC in the system. In a system with multiple NICs, there are multiple ifcfg-eth<X> files (where <X> is a unique number corresponding to a specific interface). Because each device has its own configuration file, an administrator can control how each interface functions individually.

ifcfg-eth0 file for a system using a fixed IP address:

DEVICE=eth0
BOOTPROTO=none
ONBOOT=yes
NETMASK=255.255.255.0
IPADDR=10.0.1.27
USERCTL=no

ifcfg-eth0 file for an interface using DHCP looks different because IP information is provided by the DHCP server:

DEVICE=eth0
BOOTPROTO=dhcp
ONBOOT=yes
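Because these files consist of simple NAME=value assignments, they can be read with the shell's own source (.) operator. The following sketch summarizes an interface file in one line. The function describe_ifcfg is hypothetical, and the sample file is written to a temporary location rather than to /etc/sysconfig/network-scripts/. Sourcing executes the file as shell code, so this should only be done with trusted files.

```shell
# Summarize an ifcfg-style file by sourcing it in a subshell.
# describe_ifcfg is a hypothetical helper; only source trusted files.
describe_ifcfg() (
    . "$1"
    if [ "$BOOTPROTO" = dhcp ]; then
        addr="address from DHCP"
    else
        addr="static $IPADDR/$NETMASK"
    fi
    printf '%s: %s, onboot=%s\n' "$DEVICE" "$addr" "${ONBOOT:-unset}"
)

# Sample file mirroring the fixed-address example above.
sample=$(mktemp)
cat > "$sample" <<'EOF'
DEVICE=eth0
BOOTPROTO=none
ONBOOT=yes
NETMASK=255.255.255.0
IPADDR=10.0.1.27
USERCTL=no
EOF

describe_ifcfg "$sample"
# prints: eth0: static 10.0.1.27/255.255.255.0, onboot=yes
rm -f "$sample"
```

The subshell body (parentheses instead of braces) keeps the sourced variables from leaking into the calling shell.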

system-config-network) is an easy way to make changes to the various network interface configuration files (refer to Chapter 5, Network Configuration for detailed instructions on using this tool).

BONDING_OPTS=<parameters> /etc/sysconfig/network-scripts/ifcfg-bond<N> (see Section 4.2.2, “Channel Bonding Interfaces”). These parameters are identical to those used for bonding devices in /sys/class/net/<bonding device>/bonding, and the module parameters for the bonding driver as described in bonding Module Directives.

BONDING_OPTS in ifcfg-<name> , do not use /etc/modprobe.conf to specify options for the bonding device.

BOOTPROTO=<protocol>, where <protocol> is one of the following:

none — No boot-time protocol should be used.

bootp — The BOOTP protocol should be used.

dhcp — The DHCP protocol should be used.

BROADCAST=<address> <address> ifcalc.

DEVICE=<name> <name> DHCP_HOSTNAME DNS{1,2}=<address> <address> /etc/resolv.conf if the PEERDNS directive is set to yes.

ETHTOOL_OPTS=<options> <options> ethtool. For example, if you wanted to force 100Mb, full duplex:

ETHTOOL_OPTS="autoneg off speed 100 duplex full"

ETHTOOL_OPTS to set the interface speed and duplex settings. Custom initscripts run outside of the network init script lead to unpredictable results during a post-boot network service restart.

autoneg off option. This needs to be stated first, as the option entries are order-dependent.

GATEWAY=<address>, where <address> is the IP address of the network router or gateway device (if any).

HWADDR=<MAC-address>, where <MAC-address> is the hardware address of the Ethernet device in the form AA:BB:CC:DD:EE:FF. This directive must be used in machines containing more than one NIC to ensure that the interfaces are assigned the correct device names regardless of the configured load order for each NIC's module. This directive should not be used in conjunction with MACADDR.

IPADDR=<address>

MACADDR=<MAC-address>, where <MAC-address> is the hardware address of the Ethernet device in the form AA:BB:CC:DD:EE:FF. This directive is used to assign a MAC address to an interface, overriding the one assigned to the physical NIC. This directive should not be used in conjunction with HWADDR.

MASTER=<bond-interface> <bond-interface> SLAVE directive.

NETMASK=<mask> <mask> NETWORK=<address> <address> ifcalc.

ONBOOT=<answer>, where <answer> is one of the following:

yes — This device should be activated at boot-time.

no — This device should not be activated at boot-time.

PEERDNS=<answer>, where <answer> is one of the following:

yes — Modify /etc/resolv.conf if the DNS directive is set. If using DHCP, then yes is the default.

no — Do not modify /etc/resolv.conf.

SLAVE=<answer>, where <answer> is one of the following:

yes — This device is controlled by the channel bonding interface specified in the MASTER directive.

no — This device is not controlled by the channel bonding interface specified in the MASTER directive.

MASTER directive.

SRCADDR=<address>

USERCTL=<answer>, where <answer> is one of the following:

yes — Non-root users are allowed to control this device.

no — Non-root users are not allowed to control this device.
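The NETWORK and BROADCAST values listed above are derived from IPADDR and NETMASK, which is why they do not normally need to be set by hand. The following sketch shows the arithmetic using shell integer operations. The function names are hypothetical, and the sketch assumes a shell with 64-bit arithmetic and well-formed dotted-quad input.

```shell
# Convert a dotted-quad address to an integer and back.
# All function names here are hypothetical helpers.
ip_to_int() (
    IFS=.
    set -- $1      # split the address on dots into $1..$4
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

int_to_ip() {
    echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Derive the network and broadcast addresses from an address and netmask.
net_and_broadcast() {
    ip=$(ip_to_int "$1")
    mask=$(ip_to_int "$2")
    network=$(( ip & mask ))                         # clear the host bits
    broadcast=$(( network | (~mask & 4294967295) ))  # set the host bits
    echo "NETWORK=$(int_to_ip "$network") BROADCAST=$(int_to_ip "$broadcast")"
}

net_and_broadcast 10.0.1.27 255.255.255.0
# prints: NETWORK=10.0.1.0 BROADCAST=10.0.1.255
```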

bonding kernel module and a special network interface called a channel bonding interface. Channel bonding enables two or more network interfaces to act as one, simultaneously increasing the bandwidth and providing redundancy.

/etc/sysconfig/network-scripts/ directory called ifcfg-bond<N> , replacing <N> with the number for the interface, such as 0.

DEVICE= directive must be bond<N> , replacing <N> with the number for the interface.

DEVICE=bond0

IPADDR=192.168.1.1

NETMASK=255.255.255.0

ONBOOT=yes

BOOTPROTO=none

USERCTL=no

BONDING_OPTS="<bonding parameters separated by spaces>"

MASTER= and SLAVE= directives to their configuration files. The configuration files for each of the channel-bonded interfaces can be nearly identical.

eth0 and eth1 may look like the following example:

DEVICE=eth<N>

BOOTPROTO=none

ONBOOT=yes

MASTER=bond0

SLAVE=yes

USERCTL=no

<N> with the numerical value for the interface.
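Since the files for the bonded interfaces differ only in the DEVICE line, they can be generated from a template. The sketch below writes one file per interface into a scratch directory. The function write_bond_slaves and the scratch directory are hypothetical stand-ins; on a real system the files would live in /etc/sysconfig/network-scripts/.

```shell
# Write one ifcfg file per bonded NIC into a directory.
# write_bond_slaves is a hypothetical helper.
# Usage: write_bond_slaves <output-dir> <bond-name> <nic>...
write_bond_slaves() {
    out_dir=$1
    bond=$2
    shift 2
    for nic in "$@"; do
        cat > "$out_dir/ifcfg-$nic" <<EOF
DEVICE=$nic
BOOTPROTO=none
ONBOOT=yes
MASTER=$bond
SLAVE=yes
USERCTL=no
EOF
    done
}

# Demonstration in a scratch directory.
scratch=$(mktemp -d)
write_bond_slaves "$scratch" bond0 eth0 eth1
cat "$scratch/ifcfg-eth1"
rm -rf "$scratch"
```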

/etc/modprobe.conf:

alias bond<N> bonding

<N> with the number of the interface, such as 0.

BONDING_OPTS="<bonding parameters>" directive in the ifcfg-bond<N> interface file. They should not be placed in /etc/modprobe.conf. For further instructions and advice on configuring the bonding module and to view the list of bonding parameters, refer to Section 29.5.2, “The Channel Bonding Module”.

ifcfg-<if-name>:<alias-value> naming scheme.

ifcfg-eth0:0 file could be configured to specify DEVICE=eth0:0 and a static IP address of 10.0.0.2, serving as an alias of an Ethernet interface already configured to receive its IP information via DHCP in ifcfg-eth0. Under this configuration, eth0 is bound to a dynamic IP address, but the same physical network card can receive requests via the fixed, 10.0.0.2 IP address.

ifcfg-<if-name>-<clone-name> . While an alias file allows multiple addresses for an existing interface, a clone file is used to specify additional options for an interface. For example, a standard DHCP Ethernet interface called eth0, may look similar to this:

DEVICE=eth0
ONBOOT=yes
BOOTPROTO=dhcp

USERCTL directive is no if it is not specified, users cannot bring this interface up and down. To give users the ability to control the interface, create a clone by copying ifcfg-eth0 to ifcfg-eth0-user and add the following line to ifcfg-eth0-user:

USERCTL=yes

eth0 interface using the /sbin/ifup eth0-user command because the configuration options from ifcfg-eth0 and ifcfg-eth0-user are combined. While this is a very basic example, this method can be used with a variety of options and interfaces.

ifcfg-ppp<X> <X> is a unique number corresponding to a specific interface.

wvdial, the Network Administration Tool or Kppp is used to create a dialup account. It is also possible to create and edit this file manually.

ifcfg-ppp0 file:

DEVICE=ppp0
NAME=test
WVDIALSECT=test
MODEMPORT=/dev/modem
LINESPEED=115200
PAPNAME=test
USERCTL=true
ONBOOT=no
PERSIST=no
DEFROUTE=yes
PEERDNS=yes
DEMAND=no
IDLETIMEOUT=600

ifcfg-sl0.

DEFROUTE=<answer>, where <answer> is one of the following:

yes — Set this interface as the default route.

no — Do not set this interface as the default route.

DEMAND=<answer>, where <answer> is one of the following:

yes — This interface allows pppd to initiate a connection when someone attempts to use it.

no — A connection must be manually established for this interface.

IDLETIMEOUT=<value> <value> INITSTRING=<string> <string> LINESPEED=<value> <value> 57600, 38400, 19200, and 9600.

MODEMPORT=<device> <device> MTU=<value> <value> 576 results in fewer packets dropped and a slight improvement to the throughput for a connection.

NAME=<name>

PAPNAME=<name>

PERSIST=<answer>, where <answer> is one of the following:

yes — This interface should be kept active at all times, even if deactivated after a modem hang up.

no — This interface should not be kept active at all times.

REMIP=<address> <address> WVDIALSECT=<name> <name> /etc/wvdial.conf. This file contains the phone number to be dialed and other important information for the interface.

ifcfg-lo /etc/sysconfig/network-scripts/ifcfg-lo, should never be edited manually. Doing so can prevent the system from operating correctly.

ifcfg-irlan0 ifcfg-plip0 /etc/sysconfig/network-scripts/ directory: /sbin/ifdown and /sbin/ifup.

ifup and ifdown interface scripts are symbolic links to scripts in the /sbin/ directory. When either of these scripts is called, it requires the name of the interface to be specified, such as:

ifup eth0

ifup and ifdown interface scripts are the only scripts that the user should use to bring up and take down network interfaces.

/etc/rc.d/init.d/functions and /etc/sysconfig/network-scripts/network-functions. Refer to Section 4.5, “Network Function Files” for more information.

/etc/sysconfig/network-scripts/ directory:

ifup-aliases

ifup-ippp and ifdown-ippp

ifup-ipv6 and ifdown-ipv6

ifup-plip

ifup-plusb

ifup-post and ifdown-post

ifup-ppp and ifdown-ppp

ifup-routes

ifdown-sit and ifup-sit

ifup-wireless

/etc/sysconfig/network-scripts/ directory can cause interface connections to act irregularly or fail. Only advanced users should modify scripts related to a network interface.

/sbin/service command on the network service (/etc/rc.d/init.d/network), as illustrated by the following command:

/sbin/service network <action>

<action> can be either start, stop, or restart.

/sbin/service network status

route command to display the IP routing table.

/etc/sysconfig/network-scripts/route-interface file. For example, static routes for the eth0 interface would be stored in the /etc/sysconfig/network-scripts/route-eth0 file. The route-interface file has two formats: IP command arguments and network/netmask directives.

default X.X.X.X dev interface

X.X.X.X is the IP address of the default gateway. The interface is the interface that is connected to, or can reach, the default gateway.

X.X.X.X/X via X.X.X.X dev interface

X.X.X.X/X is the network number and netmask for the static route. X.X.X.X and interface are the IP address and interface for the default gateway respectively. The X.X.X.X address does not have to be the default gateway IP address. In most cases, X.X.X.X will be an IP address in a different subnet, and interface will be the interface that is connected to, or can reach, that subnet. Add as many static routes as required.

route-eth0 file using the IP command arguments format. The default gateway is 192.168.0.1, interface eth0. The two static routes are for the 10.10.10.0/24 and 172.16.1.0/24 networks:

default 192.168.0.1 dev eth0
10.10.10.0/24 via 192.168.0.1 dev eth0
172.16.1.0/24 via 192.168.0.1 dev eth0

10.10.10.0/24 via 10.10.10.1 dev eth1

ifup command: "RTNETLINK answers: File exists" or 'Error: either "to" is a duplicate, or "X.X.X.X" is a garbage.', where X.X.X.X is the gateway, or a different IP address. These errors can also occur if you have another route to another network using the default gateway. Both of these errors are safe to ignore.

route-interface files. The following is a template for the network/netmask format, with instructions following afterwards:

ADDRESS0=X.X.X.X
NETMASK0=X.X.X.X
GATEWAY0=X.X.X.X

ADDRESS0=X.X.X.X is the network number for the static route.

NETMASK0=X.X.X.X is the netmask for the network number defined with ADDRESS0=X.X.X.X.

GATEWAY0=X.X.X.X is the default gateway, or an IP address that can be used to reach ADDRESS0=X.X.X.X.

route-eth0 file using the network/netmask directives format. The default gateway is 192.168.0.1, interface eth0. The two static routes are for the 10.10.10.0/24 and 172.16.1.0/24 networks. However, as mentioned before, this example is not necessary as the 10.10.10.0/24 and 172.16.1.0/24 networks would use the default gateway anyway:

ADDRESS0=10.10.10.0
NETMASK0=255.255.255.0
GATEWAY0=192.168.0.1
ADDRESS1=172.16.1.0
NETMASK1=255.255.255.0
GATEWAY1=192.168.0.1

ADDRESS0, ADDRESS1, ADDRESS2, and so on.

ADDRESS0=10.10.10.0
NETMASK0=255.255.255.0
GATEWAY0=10.10.10.1
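The two route file formats carry the same information, so an entry in the network/netmask format can be rewritten in the IP command arguments format once the netmask is converted to a prefix length. The following is a rough sketch of that conversion. The function names are hypothetical, and the netmask check is deliberately simple: it validates each octet but does not verify that the set bits are contiguous across octets.

```shell
# Convert a dotted-quad netmask to a prefix length.
# mask_to_prefix and route_line are hypothetical helpers.
mask_to_prefix() (
    IFS=.
    bits=0
    for octet in $1; do
        case $octet in
            255) bits=$((bits + 8)) ;;
            254) bits=$((bits + 7)) ;;
            252) bits=$((bits + 6)) ;;
            248) bits=$((bits + 5)) ;;
            240) bits=$((bits + 4)) ;;
            224) bits=$((bits + 3)) ;;
            192) bits=$((bits + 2)) ;;
            128) bits=$((bits + 1)) ;;
            0)   ;;
            *)   echo "invalid netmask octet: $octet" >&2; exit 1 ;;
        esac
    done
    echo "$bits"
)

# Build one line in the IP command arguments format.
# Arguments: network, netmask, gateway, interface.
route_line() {
    echo "$1/$(mask_to_prefix "$2") via $3 dev $4"
}

route_line 10.10.10.0 255.255.255.0 10.10.10.1 eth1
# prints: 10.10.10.0/24 via 10.10.10.1 dev eth1
```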

/etc/sysconfig/network-scripts/network-functions file contains the most commonly used IPv4 functions, which are useful to many interface control scripts. These functions include contacting running programs that have requested information about changes in the status of an interface, setting hostnames, finding a gateway device, verifying whether or not a particular device is down, and adding a default route.

/etc/sysconfig/network-scripts/network-functions-ipv6 file exists specifically to hold this information. The functions in this file configure and delete static IPv6 routes, create and remove tunnels, add and remove IPv6 addresses to an interface, and test for the existence of an IPv6 address on an interface.

/usr/share/doc/initscripts-<version>/sysconfig.txt /usr/share/doc/iproute-<version>/ip-cref.ps ip command, which can be used to manipulate routing tables, among other things. Use the ggv or kghostview application to view this file.

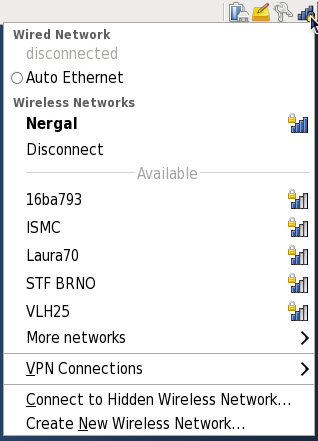

DSL and PPPoE (Point-to-Point over Ethernet). In addition, NetworkManager allows for the configuration of network aliases, static routes, DNS information and VPN connections, as well as many connection-specific parameters. Finally, NetworkManager provides a rich API via D-Bus which allows applications to query and control network configuration and state.

system-config-network after its command line invocation. In Fedora 13, NetworkManager replaces the former Network Administration Tool while providing enhanced functionality, such as user-specific and mobile broadband configuration. It is also possible to configure the network in Fedora 13 by editing interface configuration files; refer to Chapter 4, Network Interfaces for more information.

~]# yum install NetworkManager

~]# service NetworkManager status

NetworkManager (pid 1527) is running...

service command will report NetworkManager is stopped if the NetworkManager service is not running. To start it for the current session:

~]# service NetworkManager start

chkconfig command to ensure that NetworkManager starts up every time the system boots:

~]# chkconfig NetworkManager on

~]$ nm-applet &

~]$ nm-connection-editor &

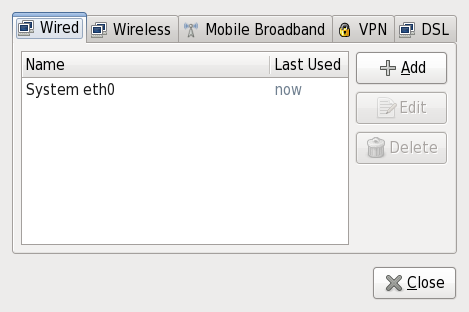

/etc/sysconfig/networking/ directory (mainly in ifcfg-<network_type> interface configuration files), user connection settings are stored in GConf and the GNOME keyring, and are available only during login sessions for the user who created them. Thus, logging out of the desktop session causes user-specific connections to become unavailable. Because of this property, users may wish to configure VPN connections as user connections for security purposes.

/etc/sysconfig/networking/ directory, and to delete the GConf settings from the user's session. Conversely, converting a system to a user-specific connection causes NetworkManager to remove the system-wide configuration files and create the corresponding GConf/GNOME keyring settings.

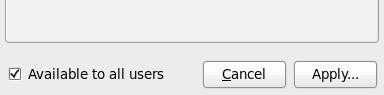

connection_name window and clicking Apply will make it a system connection. Unchecking that checkbox will make it a user connection. Depending on the aforementioned policy setting, NetworkManager may invoke PolicyKit to ensure you have the appropriate privileges, in which case you will be prompted for the root password.

| Wired | Wireless | Mobile Broadband | VPN | DSL | |

|---|---|---|---|---|---|

| Wired | Section 5.4.1, “Configuring the Wired Tab” | ||||

| 802.1x Security | Section 5.4.2, “Configuring the 802.1x Security Tab” | ||||

| Wireless | Section 5.4.3, “Configuring the Wireless Tab” | ||||

| Wireless Security | Section 5.4.4, “Configuring the Wireless Security Tab” | ||||

| Mobile Broadband | Section 5.4.5, “Configuring the Mobile Broadband Tab” | ||||

| PPP Settings | Section 5.4.6, “Configuring the PPP Settings Tab” | ||||

| VPN | Section 5.4.7, “Configuring the VPN Tab” | ||||

| DSL | Section 5.4.8, “Configuring the DSL Tab” | ||||

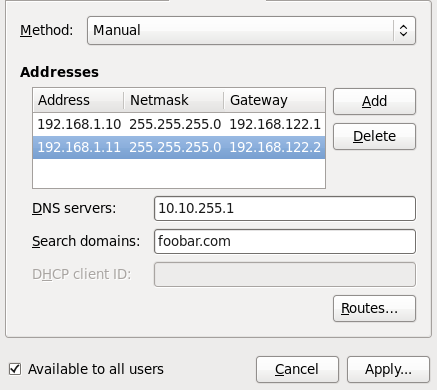

| IPv4 Settings | Section 5.4.9, “Configuring the IPv4 Settings Tab” | ||||

| IPv6 Settings | Section 5.4.10, “Configuring the IPv6 Settings Tab” | ||||

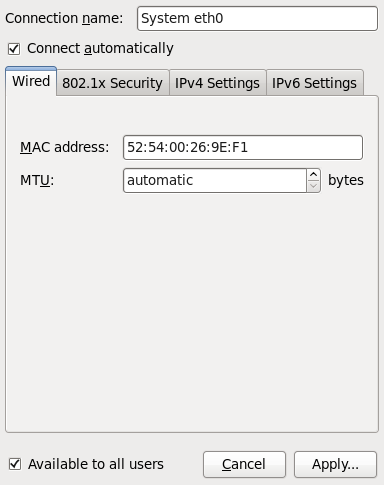

ifconfig command will show the MAC address associated with each interface: HWaddr 00:1C:25:14:4A:E0 for example.

1500 when using IPv4, or a variable number 1280 or higher for IPv6, and does not generally need to be specified or changed.

httpd if you are running a Web server). However, if you do not need to provide a service, you should turn it off to minimize your exposure to possible bug exploits.

xinetd and the services in the /etc/rc.d/init.d hierarchy (also known as SysV services) can be configured to start or stop using three different applications:

xinetd services cannot be started, stopped, or restarted using this program.

chkconfig xinetd services cannot be started, stopped, or restarted using this utility.

/etc/rc.d by hand or editing the xinetd configuration files in /etc/xinetd.d.

iptables to configure an IP firewall. If you are a new Linux user, note that iptables may not be the best solution for you. Setting up iptables can be complicated, and is best tackled by experienced Linux system administrators.

iptables is flexibility. For example, if you need a customized solution which provides certain hosts access to certain services, iptables can provide it for you. Refer to and for more information about iptables.

/etc/rc.d/rc<x>.d, where <x> is the number of the runlevel.

/etc/inittab file, which contains a line near the top of the file similar to the following:

id:5:initdefault:
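The default runlevel can be read from that line with a short awk filter, since the fields are separated by colons. This is only a sketch: default_runlevel is a hypothetical helper, and the demonstration uses a temporary sample file rather than the real /etc/inittab.

```shell
# Print the default runlevel recorded in an inittab-style file.
# default_runlevel is a hypothetical helper.
default_runlevel() {
    awk -F: '!/^#/ && $3 == "initdefault" { print $2; exit }' "$1"
}

# Demonstration with a sample file.
sample=$(mktemp)
cat > "$sample" <<'EOF'
# sample comment line
id:5:initdefault:
EOF

default_runlevel "$sample"
# prints: 5
rm -f "$sample"
```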

xinetd (as well as any program with built-in support for libwrap) can use TCP wrappers to manage access. xinetd can use the /etc/hosts.allow and /etc/hosts.deny files to configure access to system services. As the names imply, hosts.allow contains a list of rules that allow clients to access the network services controlled by xinetd, and hosts.deny contains rules to deny access. The hosts.allow file takes precedence over the hosts.deny file. Permissions to grant or deny access can be based on individual IP addresses (or hostnames) or on a pattern of clients. Refer to hosts_access in section 5 of the man pages (man 5 hosts_access) for details.

xinetd xinetd, which is a secure replacement for inetd. The xinetd daemon conserves system resources, provides access control and logging, and can be used to start special-purpose servers. xinetd can also be used to grant or deny access to particular hosts, provide service access at specific times, limit the rate of incoming connections, limit the load created by connections, and more.

xinetd runs constantly and listens on all ports for the services it manages. When a connection request arrives for one of its managed services, xinetd starts up the appropriate server for that service.

xinetd is /etc/xinetd.conf, but the file only contains a few defaults and an instruction to include the /etc/xinetd.d directory. To enable or disable an xinetd service, edit its configuration file in the /etc/xinetd.d directory. If the disable attribute is set to yes, the service is disabled. If the disable attribute is set to no, the service is enabled. You can edit any of the xinetd configuration files or change its enabled status using the Services Configuration Tool, ntsysv, or chkconfig. For a list of network services controlled by xinetd, review the contents of the /etc/xinetd.d directory with the command ls /etc/xinetd.d.
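The disable attribute can be checked from the command line without opening each file. The sketch below reports the state of one service file. The function xinetd_service_state is hypothetical, and the sample file contents are illustrative rather than copied from a real /etc/xinetd.d/ entry.

```shell
# Report whether an xinetd service file sets disable = yes or no.
# xinetd_service_state is a hypothetical helper.
xinetd_service_state() {
    awk '$1 == "disable" && $2 == "=" {
             print ($3 == "yes" ? "disabled" : "enabled")
             found = 1
             exit
         }
         END { if (!found) print "unknown (no disable attribute)" }' "$1"
}

# Demonstration with an illustrative service file.
sample=$(mktemp)
cat > "$sample" <<'EOF'
service rsync
{
        disable = yes
        socket_type = stream
        wait = no
        user = root
        server = /usr/bin/rsync
        server_args = --daemon
}
EOF

xinetd_service_state "$sample"
# prints: disabled
rm -f "$sample"
```

The same function could be run in a loop over the files in /etc/xinetd.d to get an overview of all managed services.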

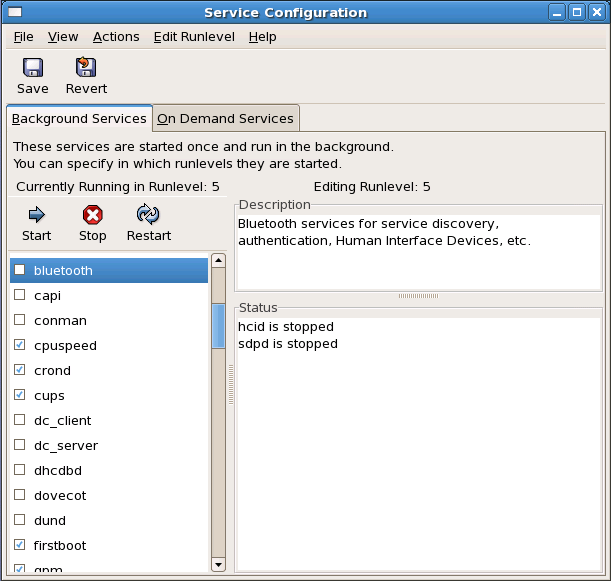

/etc/rc.d/init.d directory are started at boot time (for runlevels 3, 4, and 5) and which xinetd services are enabled. It also allows you to start, stop, and restart SysV services as well as reload xinetd.

system-config-services at a shell prompt (for example, in an XTerm or a GNOME terminal).

/etc/rc.d/init.d directory as well as the services controlled by xinetd. Click on the name of the service from the list on the left-hand side of the application to display a brief description of that service as well as the status of the service. If the service is not an xinetd service, the status window shows whether the service is currently running. If the service is controlled by xinetd, the status window displays the phrase xinetd service.

xinetd service, the action buttons are disabled because they cannot be started or stopped individually.

If you enable or disable an xinetd service by checking or unchecking the checkbox next to the service name, you must save the changes from the pulldown menu (or with the button above the tabs) to reload xinetd and immediately enable or disable the xinetd service that you changed. xinetd is also configured to remember the setting. You can enable or disable multiple xinetd services at a time and save the changes when you are finished.

For example, assume you check rsync to enable it in runlevel 3 and then save the changes. The rsync service is immediately enabled. The next time xinetd is started, rsync is still enabled.

When you save changes to xinetd services, xinetd is reloaded, and the changes take place immediately. When you save changes to other services, the runlevel is reconfigured, but the changes do not take effect immediately.

To enable a non-xinetd service to start at boot time for the currently selected runlevel, check the box beside the name of the service in the list. After configuring the runlevel, apply the changes by saving them from the pulldown menu. The runlevel configuration is changed, but the runlevel is not restarted; thus, the changes do not take place immediately.

For example, assume you are configuring runlevel 3. If you change the value for the httpd service from checked to unchecked and then save the changes, the runlevel 3 configuration changes so that httpd is not started at boot time. However, runlevel 3 is not reinitialized, so httpd is still running. Select one of the following options at this point:

Stop the httpd service: Stop the service by selecting it from the list and clicking the button that stops it. A message appears stating that the service was stopped successfully.

Reinitialize the runlevel: Reinitialize the runlevel by going to a shell prompt and typing the command telinit x (where x is the runlevel number; in this example, 3). This option is recommended if you change the Start at Boot value of multiple services and want to activate the changes immediately.

Do nothing else: You do not have to stop the httpd service. You can wait until the system is rebooted for the service to stop. The next time the system is booted, the runlevel is initialized without the httpd service running.

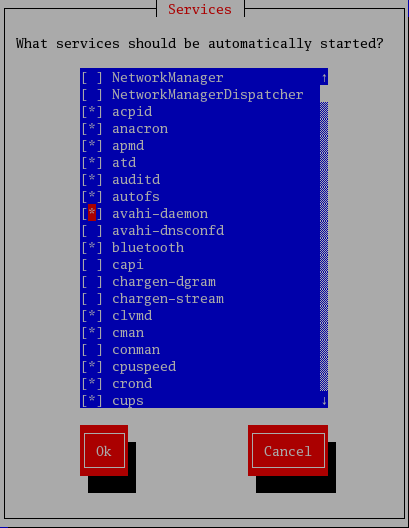

You can use ntsysv to turn an xinetd-managed service on or off. You can also use ntsysv to configure runlevels. By default, only the current runlevel is configured. To configure a different runlevel, specify one or more runlevels with the --level option. For example, the command ntsysv --level 345 configures runlevels 3, 4, and 5.

Services managed by xinetd are immediately affected by ntsysv. For all other services, changes do not take effect immediately. You must stop or start the individual service with the command service <daemon> stop (where <daemon> is the name of the service you want to stop; for example, httpd). Replace stop with start or restart to start or restart the service.

chkconfig

The chkconfig command can also be used to activate and deactivate services. The chkconfig --list command displays a list of system services and whether they are started (on) or stopped (off) in runlevels 0-6. At the end of the list is a section for the services managed by xinetd.

If the chkconfig --list command is used to query a service managed by xinetd, it displays whether the xinetd service is enabled (on) or disabled (off). For example, the command chkconfig --list rsync returns the following output:

rsync on

rsync is enabled as an xinetd service. If xinetd is running, rsync is enabled.

If you use chkconfig --list to query a service in /etc/rc.d, that service's settings for each runlevel are displayed. For example, the command chkconfig --list httpd returns the following output:

httpd 0:off 1:off 2:on 3:on 4:on 5:on 6:off
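The per-runlevel output shown above is easy to turn into a data structure when a script needs to act on it. The following is a minimal sketch that assumes the line has exactly the format shown in this section; the function name is hypothetical.

```python
def parse_chkconfig_line(line: str) -> tuple[str, dict[int, bool]]:
    """Split one `chkconfig --list <service>` line into name and runlevel states."""
    name, *fields = line.split()
    levels = {}
    for field in fields:
        level, state = field.split(":")
        levels[int(level)] = state == "on"
    return name, levels


name, levels = parse_chkconfig_line("httpd 0:off 1:off 2:on 3:on 4:on 5:on 6:off")
enabled = [level for level, is_on in levels.items() if is_on]
print(name, enabled)  # httpd [2, 3, 4, 5]
```

The sketch does not handle the xinetd form of the output (for example, rsync on), which has no runlevel fields.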

chkconfig can also be used to configure a service to be started (or not) in a specific runlevel. For example, to turn nscd off in runlevels 3, 4, and 5, use the following command:

chkconfig --level 345 nscd off

Services managed by xinetd are immediately affected by chkconfig. For example, if xinetd is running while rsync is disabled, and the command chkconfig rsync on is executed, then rsync is immediately enabled without having to restart xinetd manually. Changes for other services do not take effect immediately after using chkconfig. You must stop or start the individual service with the command service <daemon> stop (where <daemon> is the name of the service you want to stop; for example, httpd). Replace stop with start or restart to start or restart the service.
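The difference between xinetd services and other services can be summarized in a small helper. This sketch only builds the command lines described in this section and does not execute them; the function name and the default runlevels (345) are assumptions for illustration.

```python
def commands_to_enable(service: str, managed_by_xinetd: bool, levels: str = "345") -> list[list[str]]:
    """Return the commands needed to enable a service and have it running now."""
    if managed_by_xinetd:
        # chkconfig alone is enough: xinetd services are affected immediately.
        return [["chkconfig", service, "on"]]
    # Other services need the runlevel change plus an explicit start.
    return [
        ["chkconfig", "--level", levels, service, "on"],
        ["service", service, "start"],
    ]


for command in commands_to_enable("httpd", managed_by_xinetd=False):
    print(" ".join(command))
# chkconfig --level 345 httpd on
# service httpd start
```

Returning argument lists rather than shell strings keeps the sketch safe to pass to a process launcher later without additional quoting.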

The man pages for ntsysv, chkconfig, xinetd, and xinetd.conf.

man 5 hosts_access — The man page for the format of host access control files (in section 5 of the man pages).

The xinetd webpage contains sample configuration files and a more detailed list of features.

The BIND nameserver runs as the service named in Fedora. You can manage it via the Services Configuration Tool (system-config-services).

bob.sales.example.com

Each level of the hierarchy is separated by a period (.), and the name is read from right to left. In this example, therefore, com defines the top-level domain for this resource record. The name example is a sub-domain under com, while sales is a sub-domain under example. The name furthest to the left, bob, identifies a resource record which is part of the sales.example.com domain.

Except for the part furthest to the left (bob), each section is called a zone. A zone defines a specific namespace and contains definitions of resource records, which usually contain host-to-IP address mappings and IP address-to-host mappings (the latter are called reverse records).
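The right-to-left reading of a name can be illustrated by listing the namespaces that enclose a host. This is a minimal sketch; the function name is hypothetical, and it simply treats every label except the leftmost as the start of an enclosing namespace.

```python
def enclosing_zones(fqdn: str) -> list[str]:
    """List the enclosing namespaces of a name, from the top-level domain down."""
    labels = fqdn.rstrip(".").split(".")
    # Skip the leftmost label (the host) and join the rest, right to left.
    return [".".join(labels[i:]) for i in range(len(labels) - 1, 0, -1)]


print(enclosing_zones("bob.sales.example.com"))
# ['com', 'example.com', 'sales.example.com']
```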

BIND includes a nameserver called /usr/sbin/named, an administration utility called /usr/sbin/rndc, and a DNS debugging utility called /usr/bin/dig. More information about rndc can be found in Section 7.4, “Using rndc”.

The /etc/named.conf file is the configuration file for the named daemon.

The /var/named/ directory is the named working directory, which stores zone and statistics files.

If you have installed the bind-chroot package, the BIND service will run in the /var/named/chroot environment. All configuration files will be moved there. As such, named.conf will be located in /var/named/chroot/etc/named.conf, and so on.

/etc/named.conf

The named.conf file is a collection of statements using nested options surrounded by opening and closing brace characters, { }. Administrators must be careful when editing named.conf to avoid syntax errors, as many seemingly minor errors prevent the named service from starting.

A typical named.conf file is organized similarly to the following example:

<statement-1> ["<statement-1-name>"] [<statement-1-class>] {
    <option-1>;
    <option-2>;
    <option-N>;
};

<statement-2> ["<statement-2-name>"] [<statement-2-class>] {
    <option-1>;
    <option-2>;
    <option-N>;
};

<statement-N> ["<statement-N-name>"] [<statement-N-class>] {
    <option-1>;
    <option-2>;
    <option-N>;
};

The following types of statements are commonly used in /etc/named.conf:

acl Statement

The acl (Access Control List) statement defines groups of hosts which can then be permitted or denied access to the nameserver.

An acl statement takes the following form:

acl <acl-name> { <match-element>; [<match-element>; ...] };

In this statement, replace <acl-name> with the name of the access control list and replace <match-element> with a semicolon-separated list of IP addresses. Most of the time, an individual IP address or CIDR network notation (such as 10.0.1.0/24) is used to identify the IP addresses within the acl statement.

The following access control lists are already defined as keywords:

any — Matches every IP address

localhost — Matches any IP address in use by the local system

localnets — Matches any IP address on any network to which the local system is connected

none — Matches no IP addresses

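When used in conjunction with other statements (such as the options statement), acl statements can be very useful in preventing the misuse of a BIND nameserver.

The following example defines two access control lists and uses an options statement to define how they are treated by the nameserver: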

acl black-hats {
    10.0.2.0/24;
    192.168.0.0/24;
    1234:5678::9abc/24;
};

acl red-hats { 10.0.1.0/24; };

options {
    blackhole { black-hats; };
    allow-query { red-hats; };
    allow-query-cache { red-hats; };
};

This example contains two access control lists, black-hats and red-hats. Hosts in the black-hats list are denied access to the nameserver, while hosts in the red-hats list are given normal access.
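To see how the two lists partition clients, the matching can be modeled with Python's ipaddress module. This is only a sketch of the matching logic under simplifying assumptions, not how named implements it: the decide function and its three result labels are hypothetical, and the example assumes the blackhole list is consulted before allow-query.

```python
import ipaddress

# The two access control lists from the example above.
ACLS = {
    "black-hats": ["10.0.2.0/24", "192.168.0.0/24", "1234:5678::9abc/24"],
    "red-hats": ["10.0.1.0/24"],
}


def in_acl(address: str, acl_name: str) -> bool:
    """Return True when the address falls inside any network of the named list."""
    ip = ipaddress.ip_address(address)
    # strict=False accepts entries such as 1234:5678::9abc/24 that have host bits set.
    return any(ip in ipaddress.ip_network(net, strict=False) for net in ACLS[acl_name])


def decide(address: str) -> str:
    """Model the options block: blackhole first, then allow-query."""
    if in_acl(address, "black-hats"):
        return "ignored"
    if in_acl(address, "red-hats"):
        return "answered"
    return "refused"


for client in ("10.0.2.7", "10.0.1.5", "172.16.0.1"):
    print(client, decide(client))
# 10.0.2.7 ignored
# 10.0.1.5 answered
# 172.16.0.1 refused
```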

include Statement

The include statement allows files to be included in a named.conf file. In this way, sensitive configuration data (such as keys) can be placed in a separate file with restrictive permissions.

An include statement takes the following form:

include "<file-name>";

In this statement, <file-name> is replaced with an absolute path to a file.

options Statement

The options statement defines global server configuration options and sets defaults for other statements. It can be used to specify the location of the named working directory, the types of queries allowed, and much more.

An options statement takes the following form:

options {
    <option>;
    [<option>; ...]
};

In this statement, the <option> directives are replaced with a valid option.

The following are commonly used options:

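allow-query: Specifies which hosts are allowed to query this nameserver. By default, all hosts are allowed to query.

allow-query-cache: Similar to allow-query, this option applies to non-authoritative data, such as recursive queries. By default, only localhost and localnets are allowed to obtain non-authoritative data.

blackhole: Specifies which hosts are not allowed to query the nameserver. The default is none.

directory: Specifies the named working directory if different from the default value, /var/named/.

forwarders: Specifies a list of IP addresses of nameservers to which requests are forwarded for resolution.

forward: Specifies the forwarding behavior of a forwarders directive. The following values are accepted: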

first — Specifies that the nameservers listed in the forwarders directive be queried before named attempts to resolve the name itself.

only — Specifies that named does not attempt name resolution itself in the event that queries to nameservers specified in the forwarders directive fail.
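The difference between the two forward values comes down to what happens after the forwarders fail. The sketch below models that decision only; resolve, ask_forwarders, and resolve_locally are hypothetical stand-ins for illustration and are not part of BIND.

```python
from typing import Callable, Optional

Lookup = Callable[[str], Optional[str]]


def resolve(name: str, forward_mode: str, ask_forwarders: Lookup, resolve_locally: Lookup) -> Optional[str]:
    """Model `forward first` and `forward only` for a single query."""
    answer = ask_forwarders(name)  # forwarders are always consulted first
    if answer is not None:
        return answer
    if forward_mode == "only":
        return None  # never fall back to local resolution
    return resolve_locally(name)  # "first": try to resolve the name ourselves


def failing_forwarders(name: str) -> Optional[str]:
    return None  # simulates forwarders that do not answer


def local_resolver(name: str) -> Optional[str]:
    return "192.0.2.10"  # documentation address, for illustration only


print(resolve("www.example.com", "first", failing_forwarders, local_resolver))  # 192.0.2.10
print(resolve("www.example.com", "only", failing_forwarders, local_resolver))   # None
```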

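listen-on: Specifies the IPv4 network interface on which named listens for queries. By default, all IPv4 interfaces are used.

The following is an example of a listen-on directive:

options { listen-on { 10.0.1.1; }; };

With this directive, the server listens only on the 10.0.1.1 address.

listen-on-v6: Same as listen-on, except for IPv6 interfaces.

The following is an example of a listen-on-v6 directive:

options { listen-on-v6 { 1234:5678::9abc; }; };

With this directive, the server listens only on the 1234:5678::9abc address.

max-cache-size: Specifies the maximum amount of memory to use for the server cache. For example:

options { max-cache-size 256M; };

notify: Controls whether named notifies the slave servers when a zone is updated. It accepts the following options: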

yes — Notifies slave servers.

no — Does not notify slave servers.

master-only - Sends notifications only when the server is a master server for the zone.

explicit — Only notifies slave servers specified in an also-notify list within a zone statement.

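pid-file: Specifies the location of the process ID file created by named.

recursion: Specifies whether named acts as a recursive server. The default is yes. For example, to disable recursion:

options { recursion no; };

statistics-file: Specifies an alternate location for statistics files. By default, named statistics are saved to the /var/named/named.stats file.

Refer to the named.conf man page for more details.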

zone Statement

The zone statement defines the characteristics of a zone, such as the location of its configuration file and zone-specific options. This statement can be used to override the global options statements.

A zone statement takes the following form:

zone <zone-name> <zone-class> { <zone-options>; [<zone-options>; ...] };

In this statement, <zone-name> is the name of the zone, <zone-class> is the optional class of the zone, and <zone-options> is a list of options characterizing the zone.

The <zone-name> attribute for the zone statement is particularly important. It is the default value assigned for the $ORIGIN directive used within the corresponding zone file located in the /var/named/ directory. The named daemon appends the name of the zone to any non-fully qualified domain name listed in the zone file.

For example, if a zone statement defines the namespace for example.com, use example.com as the <zone-name> so it is placed at the end of hostnames within the example.com zone file.
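The way the zone name is appended to names that are not fully qualified can be sketched in a few lines. The qualify function is a hypothetical illustration of that rule, not code from BIND; it treats a trailing period as the mark of a fully qualified name.

```python
def qualify(name: str, origin: str) -> str:
    """Append the zone name ($ORIGIN) to a name that is not fully qualified."""
    origin = origin.rstrip(".") + "."  # normalize to a trailing period
    if name.endswith("."):
        return name  # already fully qualified, left unchanged
    return f"{name}.{origin}"


print(qualify("www", "example.com"))                # www.example.com.
print(qualify("mail.example.com.", "example.com"))  # mail.example.com.
print(qualify("mail.example.com", "example.com"))   # mail.example.com.example.com.
```

The last call shows a common mistake: omitting the trailing period from a full name in a zone file causes the zone name to be appended a second time.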

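The most common zone statement options include the following:

allow-query: Specifies which clients are allowed to request information about this zone, overriding the global allow-query option. The default is to allow all query requests.

allow-transfer: Specifies the slave servers that are allowed to request a transfer of the zone's information.

allow-update: Specifies the hosts that are allowed to dynamically update information in their zone.

file: Specifies the name of the file in the named working directory that contains the zone's configuration data.

masters: Specifies the IP addresses from which to request authoritative zone information. This option is used only if the zone is defined as type slave.

notify: Controls whether named notifies the slave servers when a zone is updated. This option has the same parameters as the global notify option.

type: Defines the type of zone. The following types are available:

delegation-only — Enforces the delegation status of infrastructure zones such as COM, NET, or ORG. Any answer that is received without an explicit or implicit delegation is treated as NXDOMAIN. This option is only applicable in TLDs or root zone files used in recursive or caching implementations.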

forward — Forwards all requests for information about this zone to other nameservers.

hint — A special type of zone used to point to the root nameservers which resolve queries when a zone is not otherwise known. No configuration beyond the default is necessary with a hint zone.

master — Designates the nameserver as authoritative for this zone. A zone should be set as the master if the zone's configuration files reside on the system.

slave — Designates the nameserver as a slave server for this zone. The master server is specified in the masters directive.

zone Statements

Most changes to the /etc/named.conf file of a master or slave nameserver involve adding, modifying, or deleting zone statements. While these zone statements can contain many options, most nameservers require only a small subset to function efficiently. The following zone statements are very basic examples illustrating a master-slave nameserver relationship.

The following is an example of a zone statement for the primary nameserver hosting example.com (192.168.0.1):

zone "example.com" IN {
    type master;
    file "example.com.zone";
    allow-transfer { 192.168.0.2; };
};

In the statement, the zone is identified as example.com, the type is set to master, and the named service is instructed to read the /var/named/example.com.zone file. It also allows only the slave nameserver (192.168.0.2) to transfer the zone.

A slave server's zone statement for example.com is slightly different from the previous example. For a slave server, the type is set to slave, and the masters directive tells named the IP address of the master server.

The following is an example of a slave server's zone statement for the example.com zone:

zone "example.com" {
    type slave;
    file "slaves/example.com.zone";
    masters { 192.168.0.1; };
};

This zone statement configures named on the slave server to query the master server at the 192.168.0.1 IP address for information about the example.com zone. The information that the slave server receives from the master server is saved to the /var/named/slaves/example.com.zone file. Make sure you put all slave zones in the /var/named/slaves directory; otherwise, named will fail to transfer the zone.
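Because the master and slave statements differ only in the type, the file path, and the option naming the peer server, both can be produced from one template. The following is a minimal sketch; zone_statement is a hypothetical helper, and it omits the optional zone class.

```python
def zone_statement(zone: str, role: str, file_name: str, peers: list[str]) -> str:
    """Render a basic master or slave zone statement as named.conf text."""
    if role not in ("master", "slave"):
        raise ValueError("role must be 'master' or 'slave'")
    # A master lists who may transfer the zone; a slave lists its masters.
    peer_option = "allow-transfer" if role == "master" else "masters"
    peer_list = " ".join(f"{ip};" for ip in peers)
    return "\n".join([
        f'zone "{zone}" {{',
        f"    type {role};",
        f'    file "{file_name}";',
        f"    {peer_option} {{ {peer_list} }};",
        "};",
    ])


print(zone_statement("example.com", "master", "example.com.zone", ["192.168.0.2"]))
print(zone_statement("example.com", "slave", "slaves/example.com.zone", ["192.168.0.1"]))
```

Generating both statements from the same inputs helps keep the two servers consistent, for example making sure the address in allow-transfer on the master is the address of the slave.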

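The following statements are also available within named.conf:

controls: Configures the security requirements necessary to use the rndc command to administer the named service.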

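Refer to the discussion of /etc/named.conf in Section 7.4, “Using rndc”, to learn more about how the controls statement is structured and what options are available.

key "<key-name>": Defines a particular key by name. Keys are used to authenticate actions such as the use of the rndc command. Two options are used with key: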

algorithm <algorithm-name> — The type of algorithm used, such as hmac-md5.

secret "<key-value>" — The encrypted key.

Refer to the discussion of /etc/rndc.conf in Section 7.4, “Using rndc”, for instructions on how to write a key statement.